- What is Oracle RMAN backup?
- Why choose AWS S3 for Oracle backups?
- Method 1: Using Oracle Secure Backup Cloud Module for RMAN backup to AWS S3
- Method 2: Using AWS CLI scripts for RMAN backup to AWS S3
- Key Considerations When Backing Up Production Databases To Amazon S3
- How can you back up Oracle databases to AWS S3 with Vinchin?
- Oracle RMAN Backup To AWS S3 FAQs
- Conclusion

Backing up your Oracle database to the cloud is now a common practice for many organizations. Amazon S3 offers a reliable, scalable, and cost-effective storage solution for critical data protection needs. But how do you connect Oracle RMAN to AWS S3? This article walks through the main methods for Oracle RMAN backup to AWS S3, explains their pros and cons, highlights key considerations for production environments, and shows you how to get started step by step.

What is Oracle RMAN backup?

Oracle Recovery Manager (RMAN) is Oracle’s built-in tool for backing up and restoring databases. RMAN automates backup tasks so administrators can focus on higher-level planning rather than manual scripting. It manages backup metadata internally and supports full backups (entire database), incremental backups (only changed blocks), and archived log backups (transaction logs). You can write these backups to disk or tape by default—or extend them to cloud storage using plugins or modules provided by Oracle.

RMAN also verifies backup integrity during creation and restore operations. This makes it an essential part of any disaster recovery plan involving Oracle databases.

Why choose AWS S3 for Oracle backups?

Amazon S3 is a popular choice for database backups because it is designed for 99.999999999% (eleven nines) object durability and offers virtually unlimited storage capacity that grows with your needs. With S3 lifecycle policies, you can automate retention rules so old backups move automatically into lower-cost storage classes such as S3 Glacier Instant Retrieval or S3 Glacier Deep Archive.

Storing Oracle RMAN backups in AWS S3 keeps your data offsite yet accessible from anywhere with proper credentials, a major advantage over traditional tape libraries or local disks that are vulnerable to physical disasters or theft.

S3 integrates tightly with other AWS services such as CloudWatch (for monitoring), IAM (for access control), and KMS (for encryption), making it easy to automate workflows while keeping your data secure at every stage.

This combination provides a secure, manageable, and cost-efficient backup destination that meets both compliance requirements and business continuity goals.

Method 1: Using Oracle Secure Backup Cloud Module for RMAN backup to AWS S3

The Oracle Secure Backup (OSB) Cloud Module is Oracle’s official solution for sending RMAN backups directly from your database server into Amazon S3 buckets—no need for extra scripts or temporary disk space if configured correctly.

Before starting this method, check the following prerequisites:

Make sure your version of Oracle Database is compatible with the OSB Cloud Module release you download.

You need an active AWS account with access rights to create/manage S3 buckets.

Set up an IAM user or role granting permissions (s3:PutObject, s3:GetObject, s3:ListBucket, s3:DeleteObject) on your chosen bucket.

Install Java 1.7+ on your server running Oracle Database.

Register an OTN account if not already done.
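The bucket permissions listed above could be expressed as an IAM policy along the following lines. This is a minimal sketch: the function name print_backup_policy and the bucket name are placeholders, and the action list is taken only from the prerequisites above, so the OSB Cloud Module release you install may need additional actions.

```shell
#!/bin/bash
# Print a least-privilege IAM policy document for one backup bucket.
# The bucket name is a placeholder supplied by the caller.
print_backup_policy() {
  local bucket="$1"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:ListBucket"],
      "Resource": "arn:aws:s3:::${bucket}"
    },
    {
      "Effect": "Allow",
      "Action": ["s3:PutObject", "s3:GetObject", "s3:DeleteObject"],
      "Resource": "arn:aws:s3:::${bucket}/*"
    }
  ]
}
EOF
}
```

Running print_backup_policy your-s3-bucket > policy.json writes the document to a file you can review before attaching it to the IAM user or role. s3:ListBucket is scoped to the bucket itself, while the object-level actions are scoped to the keys inside it.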

Step 1: Prepare your environment

First create an S3 bucket dedicated solely for database backups—this helps organize objects by workload type or retention policy later on.

Set up IAM credentials securely:

For EC2-based deployments, use IAM roles instead of long-lived access keys whenever possible; this reduces risk if credentials leak.

If using static keys, store them only in protected directories accessible by the oracle user.

Download osbws_installer.zip from Oracle’s download page.

Step 2: Create the wallet directory

On your server run:

mkdir $ORACLE_HOME/dbs/osbws_wallet

This directory stores encrypted credentials used by OSB when connecting RMAN jobs directly into Amazon S3 endpoints.

Step 3: Install the OSB Cloud Module

Extract the archive, then run the installer:

cd $ORACLE_HOME/dbs/osbws_wallet
unzip osbws_installer.zip
java -jar osbws_install.jar -AWSID <your-access-key> -AWSKey <your-secret-key> \
  -walletDir $ORACLE_HOME/dbs/osbws_wallet -libDir $ORACLE_HOME/lib \
  -location <aws-region> -awsEndPoint s3.<aws-region>.amazonaws.com \
  -otnUser <your-otn-username> -otnPass <your-otn-password>

If running inside EC2 use -IAMRole <role-name> instead of explicit keys—the module retrieves temporary tokens automatically via instance metadata service.

After installation, verify that both the library file (libosbws.so) in $ORACLE_HOME/lib and the configuration file (osbws<SID>.ora) in $ORACLE_HOME/dbs exist:

ls -la $ORACLE_HOME/lib/libosbws.so
ls -la $ORACLE_HOME/dbs/osbws*

If either file is missing, double-check the installer output; common issues include incorrect permissions or missing Java dependencies.

Step 4: Configure RMAN channels

Tell RMAN where its new media management library lives:

RMAN> CONFIGURE CHANNEL DEVICE TYPE SBT PARMS 'SBT_LIBRARY=/u01/app/oracle/product/19.0/db_1/lib/libosbws.so,SBT_PARMS=(OSB_WS_PFILE=/u01/app/oracle/product/19.0/db_1/dbs/osbws<SID>.ora)';

Replace paths/SID as appropriate per environment setup instructions above.

Step 5: Run your first cloud-native backup

Now launch a test job:

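RMAN> RUN {
  ALLOCATE CHANNEL c1 DEVICE TYPE SBT
    PARMS 'SBT_LIBRARY=/u01/app/oracle/product/19.0/db_1/lib/libosbws.so,SBT_PARMS=(OSB_WS_PFILE=/u01/app/oracle/product/19.0/db_1/dbs/osbws<SID>.ora)';
  BACKUP DATABASE PLUS ARCHIVELOG;
  RELEASE CHANNEL c1;
}

A manually allocated channel does not pick up the PARMS set with CONFIGURE CHANNEL in Step 4, so the library and parameter file are repeated here. Alternatively, drop the ALLOCATE and RELEASE lines and let RMAN use the configured automatic channel.

Check the job output carefully. Errors related to network timeouts or permission denials often indicate misconfigured IAM policies or firewall rules blocking outbound HTTPS traffic toward Amazon endpoints.

Once the job completes, log in to the AWS Console, open your designated bucket, and confirm that new objects appear with the timestamps you expect from the RMAN job.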

Troubleshooting Tips

If jobs fail mid-transfer:

Check free memory/disk space on source host—Java-based modules may require additional heap allocation under heavy load.

Review VPC security group settings if running inside private subnets; allow outbound HTTPS traffic toward the regional amazonaws.com domains used by S3 API endpoints.

Use RMAN's debug and trace options (for example, rman target / debug trace=/tmp/rman_debug.trc) when diagnosing persistent failures; logs often reveal subtle typos in parameter files or expired OTN passwords causing authentication errors.

Advanced Considerations

For large-scale production workloads, consider parallelizing channels within RMAN scripts (for example, allocating multiple channels per device type) to maximize throughput between the source host and the remote region endpoint. Monitor transfer speeds using OS-level tools (iftop, netstat) together with CloudWatch dashboards that track outgoing bandwidth utilization.
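Channel parallelism could be sketched as a small shell helper that generates the RMAN command file. The function name print_parallel_backup is hypothetical, and the library and parameter-file paths are assumed to be the ones produced by the installer in Step 3; the right channel count depends on your CPU, network, and licensing, so treat the number as something to tune.

```shell
#!/bin/bash
# Emit an RMAN RUN block that allocates N SBT channels.
# $1 = channel count, $2 = path to libosbws.so, $3 = path to osbws<SID>.ora
print_parallel_backup() {
  local channels="$1" sbt_lib="$2" osb_pfile="$3" i=1
  echo "RUN {"
  while [ "$i" -le "$channels" ]; do
    # Each manually allocated channel carries its own PARMS string.
    echo "  ALLOCATE CHANNEL c${i} DEVICE TYPE SBT"
    echo "    PARMS 'SBT_LIBRARY=${sbt_lib},SBT_PARMS=(OSB_WS_PFILE=${osb_pfile})';"
    i=$((i + 1))
  done
  echo "  BACKUP DATABASE PLUS ARCHIVELOG;"
  # Channels are released automatically when the RUN block ends.
  echo "}"
}
```

For example, redirect print_parallel_backup 4 "$ORACLE_HOME/lib/libosbws.so" "$ORACLE_HOME/dbs/osbws${ORACLE_SID}.ora" into a file and pass that file to rman with the @ syntax. RMAN spreads the backup sets across the allocated channels, so each channel opens its own upload stream to S3.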

Method 2: Using AWS CLI scripts for RMAN backup to AWS S3

Some teams prefer not to install extra plugins or modules, especially in mixed environments where direct integration is not feasible because of licensing restrictions or legacy system constraints. In these cases you can back up to local disk and then upload the files with a command-line utility such as the AWS CLI. This approach is flexible and works even on platforms that the vendor modules do not officially support.

Before choosing this method remember:

You need enough local disk space at all times to hold at least one full copy of the largest anticipated database backup, plus the archive logs generated between upload cycles.

Uploading large files over slow WAN links may take hours, or longer, depending on bandwidth caps imposed by corporate firewalls or internet providers.

If the system crashes after the local backup completes but before the upload finishes, recent changes stay unprotected until the next scheduled cycle runs successfully.
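The disk space requirement above can be checked before each run. The following is a minimal sketch; the function name has_free_space is hypothetical, and the threshold you pass in is an estimate you would derive from your own database size and archive log volume.

```shell
#!/bin/bash
# Succeed if the filesystem holding directory $1 has at least $2 KiB free.
has_free_space() {
  local dir="$1" required_kb="$2" available_kb
  # df -P gives stable POSIX-format output; -k reports 1024-byte blocks.
  # Row 2, column 4 is the "Available" figure for the filesystem.
  available_kb=$(df -Pk "$dir" | awk 'NR==2 {print $4}')
  [ "$available_kb" -ge "$required_kb" ]
}
```

A backup script could call has_free_space /backup/rman 524288000 (roughly 500 GiB) and abort with an alert when the check fails, rather than letting RMAN fill the staging volume partway through a backup.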

Step 1: Install & configure AWS CLI

Install the latest version by following the official AWS guide, then configure your credentials:

aws configure

Enter Access Key ID / Secret Access Key / Default Region / Output format as prompted.

Step 2: Run regular disk-based RMAN jobs

Back up the entire database plus archive logs locally:

RMAN> BACKUP FORMAT '/backup/rman/%U.bkp' DATABASE PLUS ARCHIVELOG;

Placing FORMAT directly after BACKUP applies it to both the datafile and the archived log backup sets. Choose the /backup/rman path based on available free space, ideally a separate mount point dedicated to staging backup pieces before upload.

Step 3: Upload files efficiently using sync command

Instead of copying everything blindly each time use:

aws s3 sync /backup/rman/ s3://your-s3-bucket/

This transfers only files that are new or changed since the last successful run, which reduces bandwidth consumption and is especially important over metered connections.

Step 4: Automate everything safely

Wrap the logic above in a shell script with error checking and logging, such as:

#!/bin/bash
LOGFILE=/var/log/rman_s3_backup.log
echo "$(date): Starting nightly Oracle RMAN backup" >> "$LOGFILE"
# Run the RMAN command file and branch on its exit status
if rman target / @/scripts/full_backup.rman >> "$LOGFILE" 2>&1; then
  echo "$(date): Backup succeeded, uploading..." >> "$LOGFILE"
  # No --delete here: it would remove S3 objects once local copies are pruned
  aws s3 sync /backup/rman/ s3://your-s3-bucket/ >> "$LOGFILE" 2>&1 \
    || echo "$(date): Upload failed!" >> "$LOGFILE"
  # Optionally remove old local copies here
else
  echo "$(date): Backup failed!" >> "$LOGFILE"
  # Send alert email/SNS notification here
fi

Schedule execution via cron (crontab -e) ensuring regular intervals match business RPO/RTO targets.

Step 5: Restore process overview

To recover simply reverse direction:

aws s3 sync s3://your-s3-bucket/ /backup/rman/

Then run the standard RMAN restore commands. If the files are downloaded to a different path than the one they were written to, register them first with CATALOG START WITH so RMAN can find them.
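The RMAN side of the restore could look like the following sketch, generated here by a hypothetical shell helper named print_restore_script. It assumes a complete recovery where the control file and spfile are intact, so the instance can be mounted; a lost control file or a point-in-time recovery needs additional steps that are not shown.

```shell
#!/bin/bash
# Emit RMAN commands for a complete restore from pieces staged in $1.
# Assumes the control file and spfile survived, so STARTUP MOUNT works.
print_restore_script() {
  local stage_dir="$1"
  cat <<EOF
STARTUP MOUNT;
CATALOG START WITH '${stage_dir}' NOPROMPT;
RESTORE DATABASE;
RECOVER DATABASE;
ALTER DATABASE OPEN;
EOF
}
```

Redirect print_restore_script /backup/rman/ into a command file and run it with rman target / followed by the @ file syntax. The CATALOG step is harmless when the pieces are already known to the control file, and it is what makes the restore work when the staging path differs from the original backup location.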

Intermediate & Advanced Tips

Monitor ongoing costs closely. Frequent uploads and downloads across regions incur data transfer fees on top of storage charges, so review monthly bills in the Cost Explorer dashboard of the AWS Console and adjust lifecycle policies as needed.

Consider encrypting sensitive backups before transmission with operating system utilities (openssl, gpg), or enable default encryption at rest on the target bucket under the Properties tab > Default encryption setting.

Limitations & Best Practices

While simple, this approach does not guarantee atomicity between backup completion and upload completion. A crash mid-process could leave gaps unless the automation script includes checkpoint logic, such as recording which files were produced and verifying them after transfer.
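One way to add such a checkpoint is a checksum manifest written after the backup finishes and uploaded last. This is a sketch under assumptions: the function names and the MANIFEST.sha256 file name are made up for illustration, the backup pieces are assumed to use the .bkp suffix from Step 2, and sha256sum from GNU coreutils is assumed to be installed.

```shell
#!/bin/bash
# Record a SHA-256 checksum for every backup piece in directory $1.
write_manifest() {
  local dir="$1"
  ( cd "$dir" && sha256sum -- *.bkp > MANIFEST.sha256 )
}

# Re-check every file listed in the manifest in directory $1.
# Returns non-zero if any file is missing or its checksum differs.
verify_manifest() {
  local dir="$1"
  ( cd "$dir" && sha256sum --check --quiet MANIFEST.sha256 )
}
```

In the upload script, call write_manifest after RMAN succeeds, sync the pieces with --exclude "MANIFEST.sha256", and copy the manifest with aws s3 cp only once the sync returns successfully. A manifest in the bucket then signals a complete upload, and verify_manifest on the restore side confirms the downloaded pieces match what was written.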

Key Considerations When Backing Up Production Databases To Amazon S3

Choosing between direct plugin integration and manual scripting depends largely on your organization's scale, security, and compliance requirements, but several factors apply regardless of the path selected:

Cost Optimization: Take advantage of S3's tiered pricing. For example, transition older backups automatically from the Standard class to S3 Glacier Instant Retrieval after a set number of days using Lifecycle Rules defined at the bucket level.

Security & Compliance: Prefer short-lived IAM roles over hardcoded static secrets stored locally, and restrict allowed actions with custom policy documents that grant only the permissions strictly necessary. Enable encryption everywhere, whether client-side in RMAN or server-side through the built-in KMS integration.

Performance Monitoring: Track end-to-end latency and bandwidth utilization during the initial rollout to establish a baseline, then adjust concurrency levels and chunk sizes based on the bottlenecks you observe in OS counters and CloudWatch dashboards.

Validation Testing: Remember that the only good backup is one proven restorable. Schedule periodic drills that restore random samples onto isolated test or dev sandboxes, validating both the procedure and the underlying media integrity with RMAN's built-in RESTORE ... VALIDATE and VALIDATE BACKUPSET commands.
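The lifecycle transition described under Cost Optimization could be set up roughly as follows. This is a sketch: the function name print_lifecycle_rules, the rule ID, and the prefix are placeholders, and the number of days is something to choose based on how often you expect to restore from older backups.

```shell
#!/bin/bash
# Print an S3 lifecycle configuration that moves objects under prefix $1
# to S3 Glacier Instant Retrieval after $2 days.
print_lifecycle_rules() {
  local prefix="$1" transition_days="$2"
  cat <<EOF
{
  "Rules": [
    {
      "ID": "rman-backup-tiering",
      "Status": "Enabled",
      "Filter": { "Prefix": "${prefix}" },
      "Transitions": [
        { "Days": ${transition_days}, "StorageClass": "GLACIER_IR" }
      ]
    }
  ]
}
EOF
}
```

Save the output to a file and apply it with aws s3api put-bucket-lifecycle-configuration, passing the bucket name and --lifecycle-configuration file://lifecycle.json. Glacier Instant Retrieval is used here because its objects stay directly readable; the Flexible Retrieval and Deep Archive classes require a restore request before the objects can be downloaded, which lengthens recovery time.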

How can you back up Oracle databases to AWS S3 with Vinchin?

Transitioning from manual methods, organizations seeking enterprise-grade simplicity should consider Vinchin Backup & Recovery—a professional data protection solution for Oracle 10g, 11g/11g R2, 12c, 18c, 19c, 21c, Oracle RAC, MySQL, SQL Server, MariaDB, PostgreSQL, PostgresPro, and TiDB.

Vinchin Backup & Recovery delivers batch database backup capabilities, granular data retention policies including GFS retention options, cloud/tape archiving integration with scheduled automation, WORM protection against ransomware alteration attempts, and thorough recovery verification via SQL scripts.

The intuitive web console ensures ease of use throughout the workflow. Performing an Oracle RMAN backup to AWS S3 with Vinchin Backup & Recovery typically involves four steps:

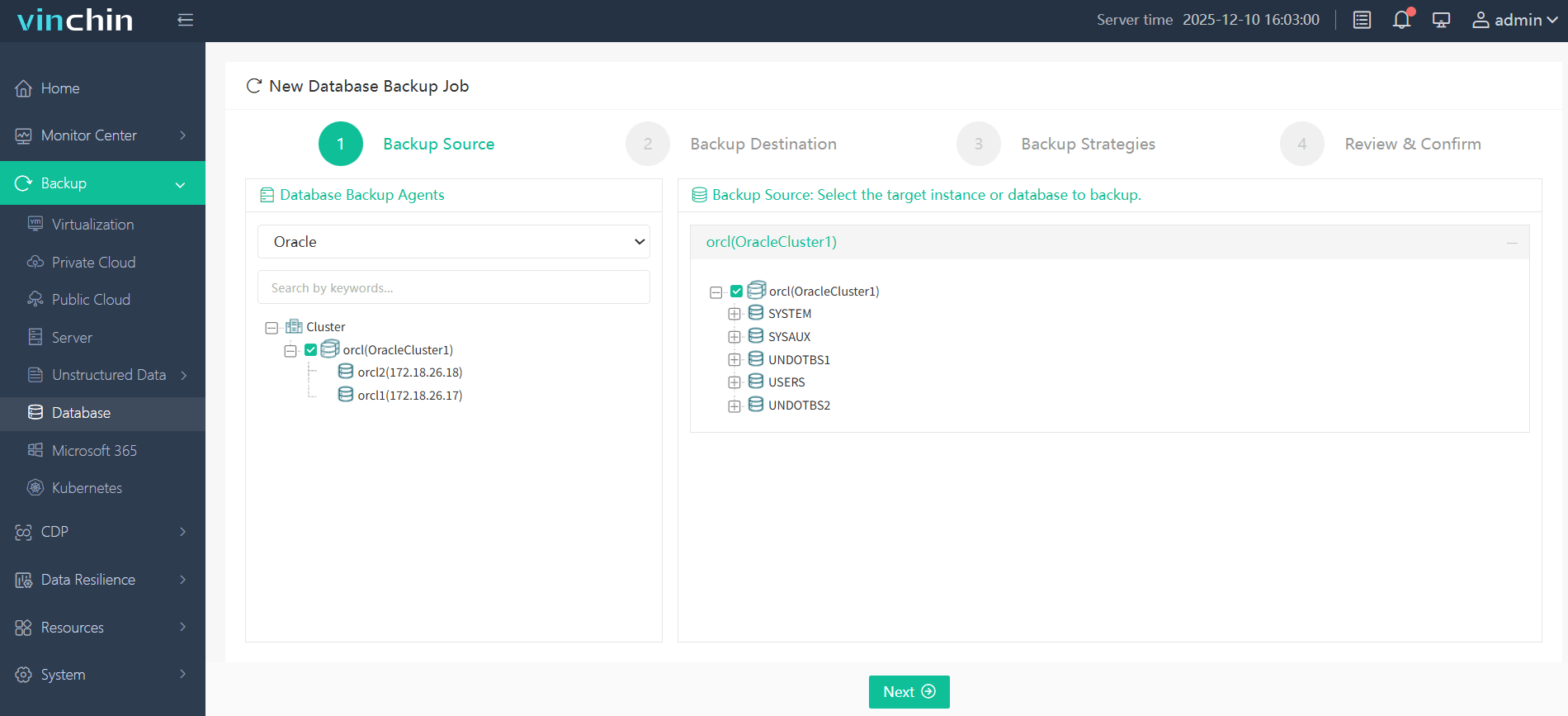

Step 1. Select the Oracle database to backup

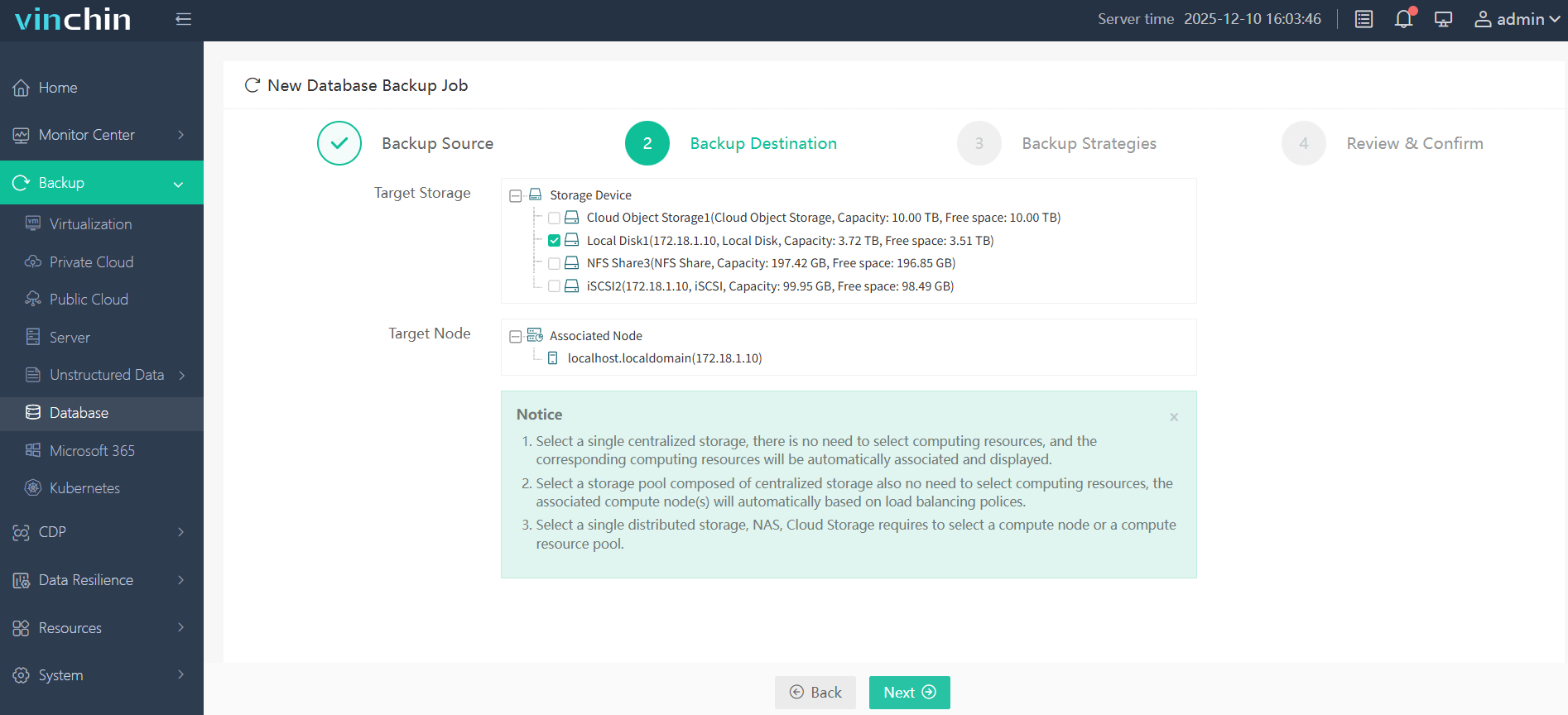

Step 2. Choose AWS S3 as the backup storage

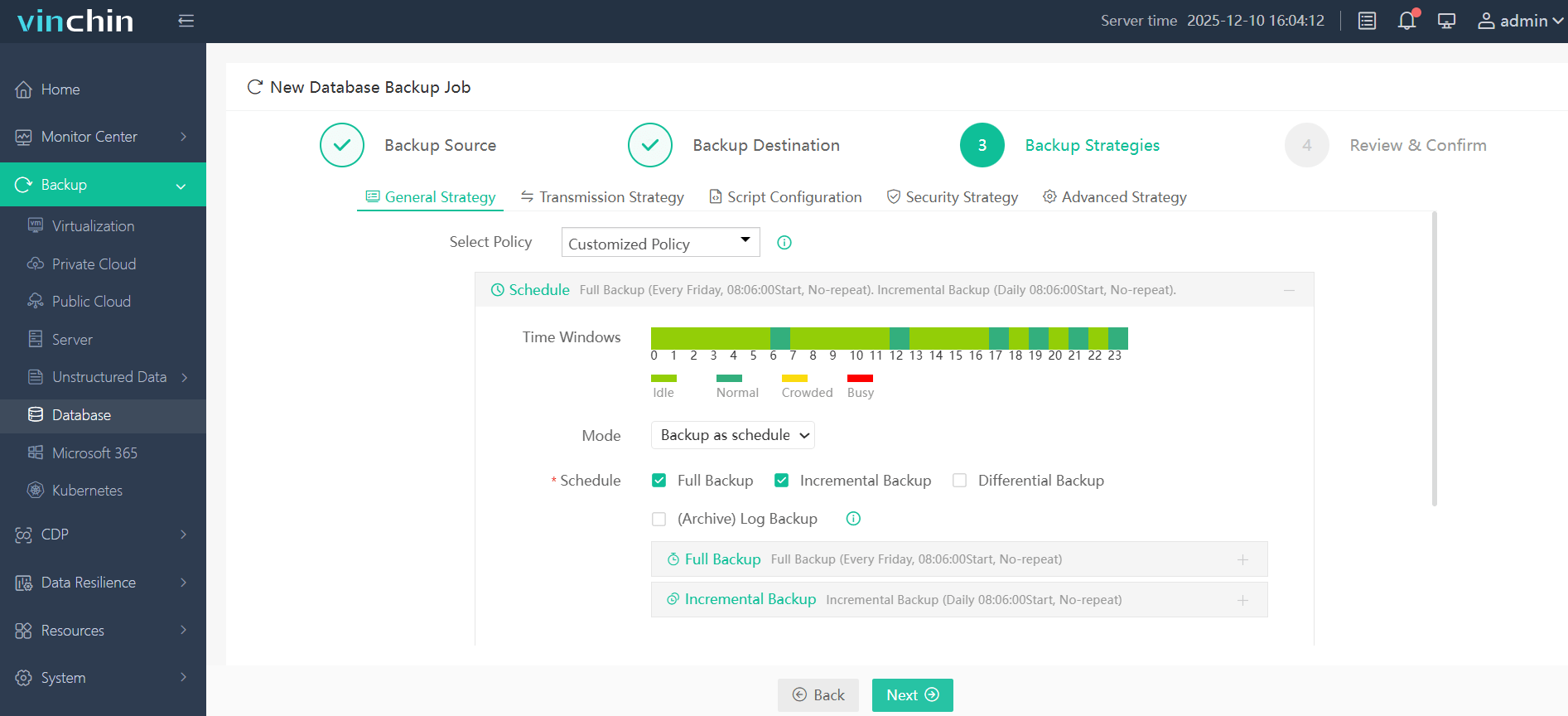

Step 3. Define the backup strategy

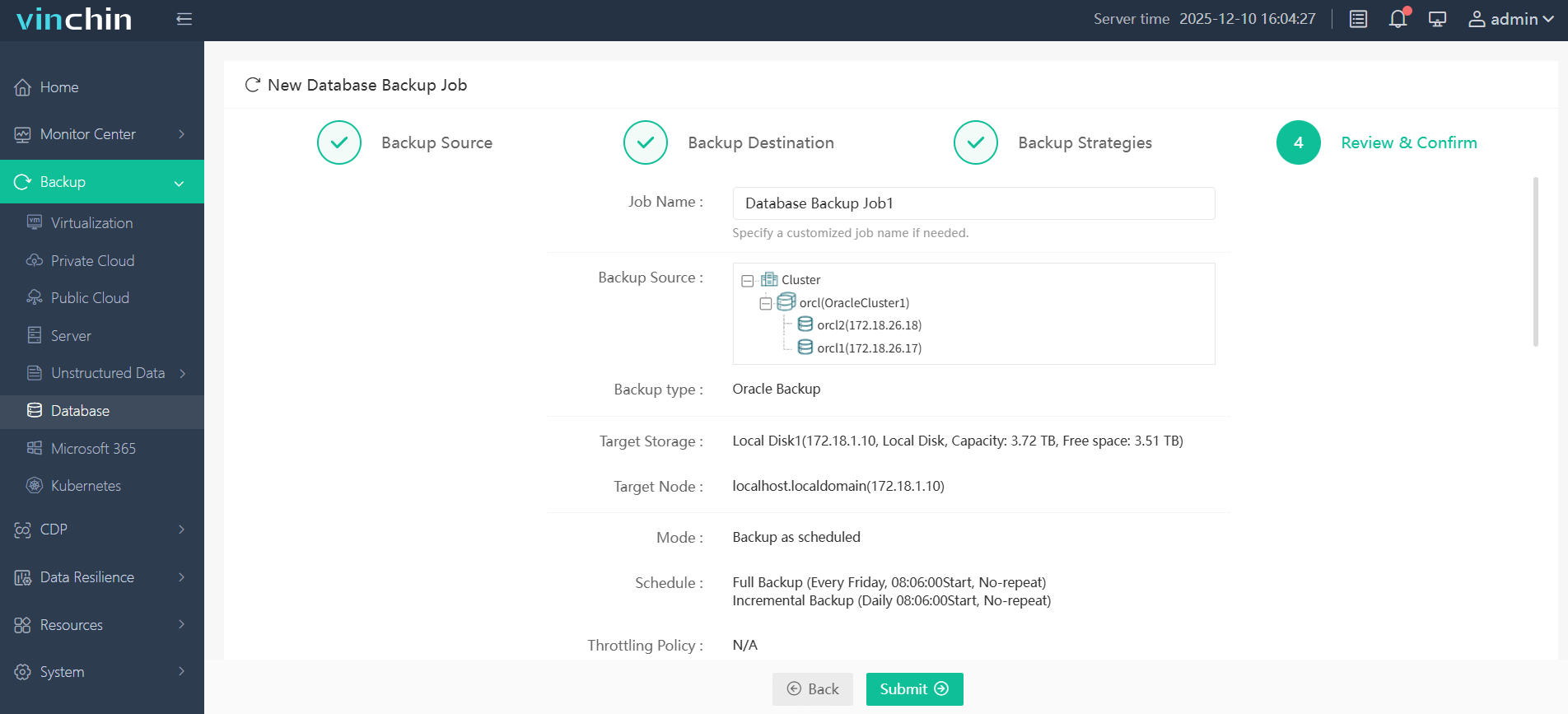

Step 4. Submit the job

Vinchin Backup & Recovery enjoys global recognition among enterprise users thanks to its strong customer base and top industry ratings. You can try all features free for 60 days and start protecting your critical databases today.

Oracle RMAN Backup To AWS S3 FAQs

Q1: Can I encrypt my Oracle RMAN backups stored in AWS S3?

A1: Yes. Enable encryption in RMAN before backing up (for example, with CONFIGURE ENCRYPTION FOR DATABASE ON), or activate default encryption in your target Amazon S3 bucket configuration.

Q2: How do I schedule automatic uploads after each nightly Oracle RMAN disk backup?

A2: Place the RMAN commands, followed by the AWS CLI sync or upload calls, in one shell script, then add the script to a crontab entry whose frequency matches your SLA and RPO objectives.

Q3: What should I do if my daily upload fails due to a network timeout?

A3: Check internet connectivity first, then review the error messages logged during the failed attempt. Retry manually once the connection is restored, and confirm that no partial or corrupted objects are left behind in the destination bucket.

Conclusion

Backing up Oracle databases directly to Amazon S3 delivers strong security, scalability, and cost savings compared with legacy approaches that rely on tapes or local disks alone. Whether you use the official plugin module, manual scripting, or a fully integrated solution like Vinchin, you gain peace of mind knowing that mission-critical data remains safe and recoverable when disaster strikes.
