- What Is Securing Kubernetes?
- Why Securing Kubernetes Matters?
- How to Implement RBAC for Securing Kubernetes?
- How to Apply Network Policies in Kubernetes?
- How to Use Image Scanning Tools for Securing Kubernetes?
- Protecting Your Clusters with Vinchin Backup & Recovery
- Securing Kubernetes FAQs

Kubernetes powers many of today’s critical business applications. But as its adoption grows, so do the risks: recent breaches have shown that attackers actively target misconfigured clusters. Securing Kubernetes is not just a technical task; it is essential to protecting your data and reputation. In this guide, we’ll explore what securing Kubernetes means, why it matters now more than ever, and how you can take practical steps to safeguard your environment.

What Is Securing Kubernetes?

Securing Kubernetes means defending your clusters against unauthorized access, misconfigurations, and vulnerabilities at every layer. The Cloud Native Computing Foundation (CNCF) describes this using the “4C” model: Cloud (infrastructure), Cluster (control plane/nodes), Container (images/runtimes), and Code (application logic). Each layer needs its own controls:

At the cloud level, secure your infrastructure with strong identity management and network segmentation.

For clusters themselves, harden control planes by limiting API server exposure and keeping nodes patched.

Containers require verified images free from known vulnerabilities or malware.

Secure code practices help prevent injection attacks or accidental secret leaks.

Securing Kubernetes is an ongoing process involving access control policies like RBAC, network segmentation through policies, vulnerability management via scanning tools—and constant monitoring for threats.

Why Securing Kubernetes Matters?

Kubernetes offers flexibility but can expose you to risk if not secured properly. A single weak configuration might let attackers steal sensitive data or disrupt services—a reality highlighted by several high-profile incidents. If RBAC is too permissive or missing entirely, users may gain admin rights they shouldn’t have. Without network policies in place, a compromised pod could attack others within your cluster.

Regulations such as GDPR or HIPAA demand strict security controls around personal data processing; failure here can mean heavy fines or lost trust from customers. Ultimately, securing Kubernetes protects both your business operations and compliance posture.

How to Implement RBAC for Securing Kubernetes?

Role-Based Access Control (RBAC) lets you define who can perform which actions inside your cluster—enforcing least privilege across users and workloads.

Start by confirming that RBAC is enabled on your API server. Most distributions enable it by default, but you can check with kubectl api-versions | grep rbac.authorization.k8s.io. If it is not enabled, start the API server with an --authorization-mode value that includes RBAC, for example --authorization-mode=Node,RBAC.

To create a read-only Role in the “default” namespace:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      namespace: default
      name: pod-reader
    rules:
      - apiGroups: [""]
        resources: ["pods"]
        verbs: ["get", "watch", "list"]

Bind this Role to a user named “jane”:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: read-pods
      namespace: default
    subjects:
      - kind: User
        name: jane
        apiGroup: rbac.authorization.k8s.io
    roleRef:
      kind: Role
      name: pod-reader
      apiGroup: rbac.authorization.k8s.io

For applications running inside pods, use ServiceAccounts instead of users: create one with kubectl create serviceaccount my-app, then bind the appropriate Role to it.
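As a minimal sketch of that last step, the manifest below declares the my-app ServiceAccount and binds it to the pod-reader Role shown earlier. The binding name my-app-read-pods is a hypothetical choice for illustration, and the example assumes everything lives in the default namespace.

```yaml
# Declarative equivalent of: kubectl create serviceaccount my-app
apiVersion: v1
kind: ServiceAccount
metadata:
  name: my-app
  namespace: default
---
# Grants the ServiceAccount the read-only pod permissions of the pod-reader Role.
# The binding name is an illustrative assumption.
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: my-app-read-pods
  namespace: default
subjects:
  - kind: ServiceAccount
    name: my-app
    namespace: default
roleRef:
  kind: Role
  name: pod-reader
  apiGroup: rbac.authorization.k8s.io
```

A pod picks up these permissions when its spec sets serviceAccountName: my-app.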

Always test permissions before going live using kubectl auth can-i <verb> <resource> --as <user>; for example:

kubectl auth can-i delete pods --as jane --namespace=default

Be careful not to grant broad permissions like "*" on all resources unless absolutely necessary, because a leaked credential then gives an attacker the same reach. Regularly audit namespaced permissions with kubectl get roles,rolebindings --all-namespaces and cluster-wide ones with kubectl get clusterroles,clusterrolebindings to catch privilege creep over time.

How to Apply Network Policies in Kubernetes?

By default all pods in a cluster can talk freely—a risky setup if any single pod gets compromised. Network Policies let you restrict traffic between pods based on labels or namespaces.

First confirm that your CNI plugin supports Network Policies (for example, Calico or Cilium); without a supporting plugin, the API server accepts policies but nothing enforces them. Then start with a simple policy that allows only backend pods to reach frontend pods within the same namespace:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: allow-backend-to-frontend
      namespace: default
    spec:
      podSelector:
        matchLabels:
          role: frontend
      ingress:
        - from:
            - podSelector:
                matchLabels:
                  role: backend

Apply it using:

kubectl apply -f networkpolicy.yaml

To enforce zero-trust networking begin with a default deny policy:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: deny-all
      namespace: default
    spec:
      podSelector: {}
      policyTypes: ["Ingress", "Egress"]

Then explicitly allow only the required connections, for example between specific namespaces or to external IP ranges.
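A default deny on egress also blocks DNS lookups, so the first explicit allow rule is usually one for DNS. The sketch below shows that rule, followed by a policy that opens one namespace-to-namespace path and one external range. The monitoring namespace and the 203.0.113.0/24 range are illustrative assumptions, and the example assumes cluster DNS runs in kube-system and that namespaces carry the automatic kubernetes.io/metadata.name label.

```yaml
# Allow every pod in "default" to resolve names via cluster DNS in kube-system.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-dns-egress
  namespace: default
spec:
  podSelector: {}
  policyTypes: ["Egress"]
  egress:
    - to:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: kube-system
      ports:
        - protocol: UDP
          port: 53
        - protocol: TCP
          port: 53
---
# Allow backend pods to receive traffic from a hypothetical "monitoring"
# namespace and to call one external range over HTTPS.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: backend-explicit-allow
  namespace: default
spec:
  podSelector:
    matchLabels:
      role: backend
  policyTypes: ["Ingress", "Egress"]
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: monitoring
  egress:
    - to:
        - ipBlock:
            cidr: 203.0.113.0/24   # documentation range; replace with the real one
      ports:
        - protocol: TCP
          port: 443
```

Network Policies are additive, so these rules widen what the deny-all policy permits rather than replacing it.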

Test enforcement by attempting connections between different pods (kubectl exec) before moving workloads into production. If something breaks unexpectedly, check labels first: policies select pods and namespaces by label, so a missing or misspelled label silently changes which traffic is allowed.

Monitor active policies with kubectl get networkpolicies --all-namespaces and troubleshoot issues using logs from your CNI plugin’s controller components.

How to Use Image Scanning Tools for Securing Kubernetes?

Container images often contain outdated libraries or hidden malware, and attackers exploit these weaknesses when they go unchecked. Image scanning helps you spot problems before deployment.

Install an open-source scanner such as Trivy following instructions from its official documentation. Scan local images like this:

trivy image nginx:latest

The output lists vulnerabilities along with severity ratings; fix critical ones before pushing images further down the pipeline.

Integrate scanning into CI/CD workflows so builds fail automatically when high-risk issues appear—add steps in Jenkins/GitLab pipelines calling Trivy directly after building each image.
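As one possible shape for such a step, here is a GitLab CI job sketch that fails the pipeline when Trivy finds HIGH or CRITICAL vulnerabilities. It assumes an earlier job has already built the image and pushed it to the project registry under the commit's short SHA; the job name and tag scheme are illustrative choices, not something the article prescribes.

```yaml
# Hypothetical job: assumes a prior build job pushed
# $CI_REGISTRY_IMAGE:$CI_COMMIT_SHORT_SHA to the project registry.
scan-image:
  stage: test
  image:
    name: aquasec/trivy:latest
    entrypoint: [""]          # override the image entrypoint so the script can run
  variables:
    # Registry credentials Trivy uses to pull the image being scanned.
    TRIVY_USERNAME: "$CI_REGISTRY_USER"
    TRIVY_PASSWORD: "$CI_REGISTRY_PASSWORD"
  script:
    # A non-zero exit code fails the job when matching findings exist.
    - trivy image --exit-code 1 --severity HIGH,CRITICAL "$CI_REGISTRY_IMAGE:$CI_COMMIT_SHORT_SHA"
```

Restricting --severity to HIGH,CRITICAL keeps low-risk findings from blocking every build; tighten or loosen the list to match your own risk tolerance.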

For even stronger protection consider enforcing scans at admission time using Admission Controllers configured via ValidatingWebhookConfiguration resources; these block unscanned images from running altogether until approved results are available.
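The registration side of such a webhook might look roughly like the sketch below. It only tells the API server to send pod creation requests to a validation service; the service itself (here a hypothetical image-scan-webhook in a security namespace) must be supplied separately, and the caBundle value is a placeholder for that service's CA certificate.

```yaml
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingWebhookConfiguration
metadata:
  name: image-scan-policy            # illustrative name
webhooks:
  - name: image-scan.example.com     # hypothetical webhook identifier
    admissionReviewVersions: ["v1"]
    sideEffects: None
    failurePolicy: Fail              # reject pods if the webhook is unreachable
    rules:
      - apiGroups: [""]
        apiVersions: ["v1"]
        operations: ["CREATE"]
        resources: ["pods"]
        scope: Namespaced
    clientConfig:
      service:
        name: image-scan-webhook     # assumed Service running the scan check
        namespace: security
        path: /validate
      caBundle: <base64-encoded CA certificate>
```

failurePolicy: Fail is the stricter choice; it trades availability for assurance, so consider excluding system namespaces with a namespaceSelector before enabling it cluster-wide.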

Sign trusted images cryptographically using tools like cosign then verify signatures during deployment—this prevents tampering between build systems and production clusters.

Finally, always pull images from trusted registries rather than random public sources, and prefer immutable references (a pinned version tag or, better, an image digest) over floating tags like “latest.”

Rescan stored images regularly, since new CVEs are published daily; automate rescans where possible through registry hooks or scheduled jobs within your CI/CD platform.
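If you would rather schedule rescans inside the cluster than in the CI/CD platform, a Kubernetes CronJob is one alternative. The sketch below is built on assumptions: the security namespace, the nightly schedule, and the image reference registry.example.com/my-app:1.4.2 are all placeholders, and a private registry would additionally need credentials.

```yaml
apiVersion: batch/v1
kind: CronJob
metadata:
  name: rescan-images
  namespace: security                # assumed namespace
spec:
  schedule: "0 3 * * *"              # every day at 03:00
  jobTemplate:
    spec:
      template:
        spec:
          restartPolicy: Never
          containers:
            - name: trivy
              image: aquasec/trivy:latest
              # The image entrypoint is the trivy binary, so these are its arguments.
              args:
                - image
                - --severity
                - HIGH,CRITICAL
                - --exit-code
                - "1"
                - registry.example.com/my-app:1.4.2   # placeholder image
```

A failed Job then signals that a stored image has picked up new high-severity findings since it was built.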

Protecting Your Clusters with Vinchin Backup & Recovery

Beyond implementing robust security controls, safeguarding data integrity requires reliable backup solutions tailored specifically for containerized environments. Vinchin Backup & Recovery stands out as an enterprise-level solution designed for comprehensive Kubernetes backup needs. It delivers advanced features, including full and incremental backups of clusters, namespaces, applications, and PVCs; fine-grained restore options at multiple levels; cross-cluster and cross-version recovery, including migration between different storage backends; and intelligent automation of backup strategies. These capabilities ensure operational resilience while simplifying compliance requirements across complex hybrid infrastructures.

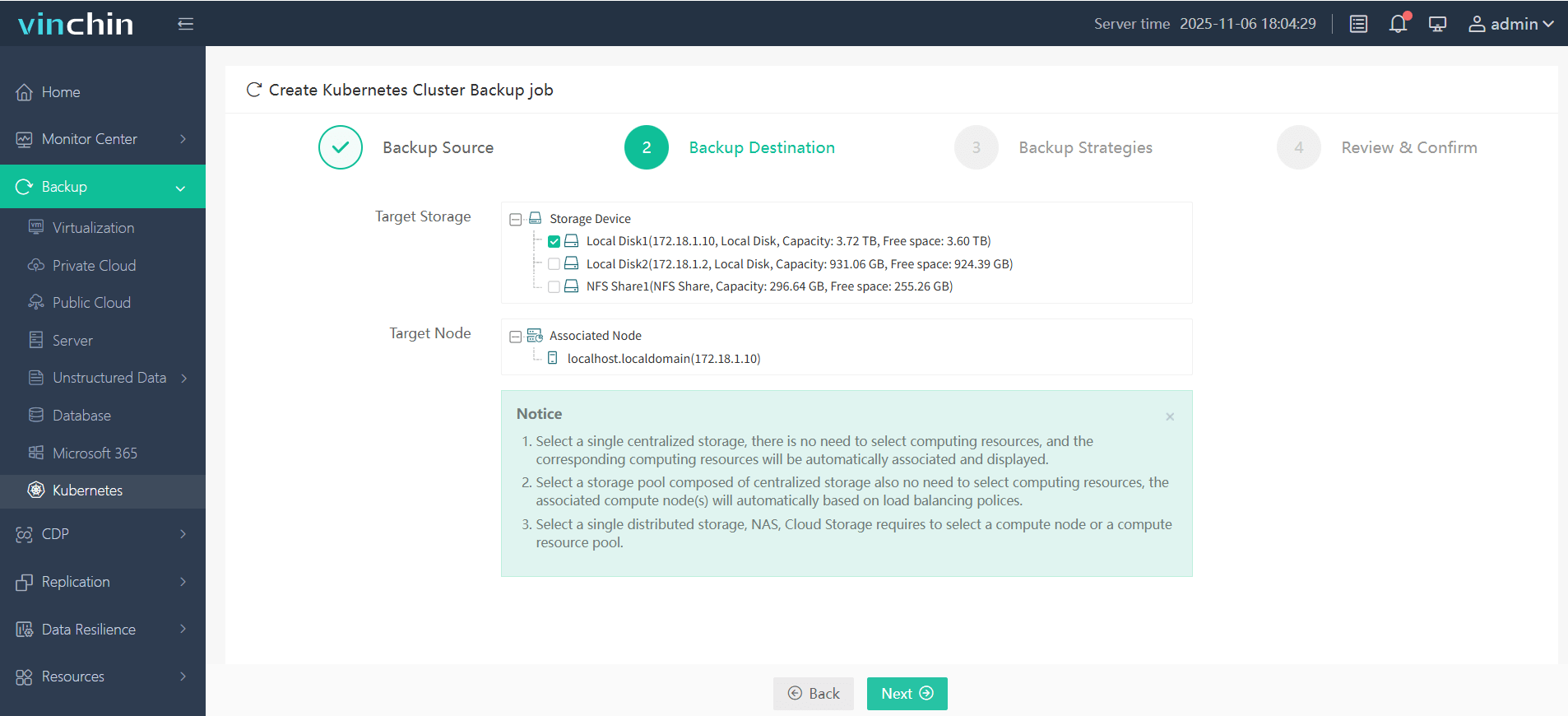

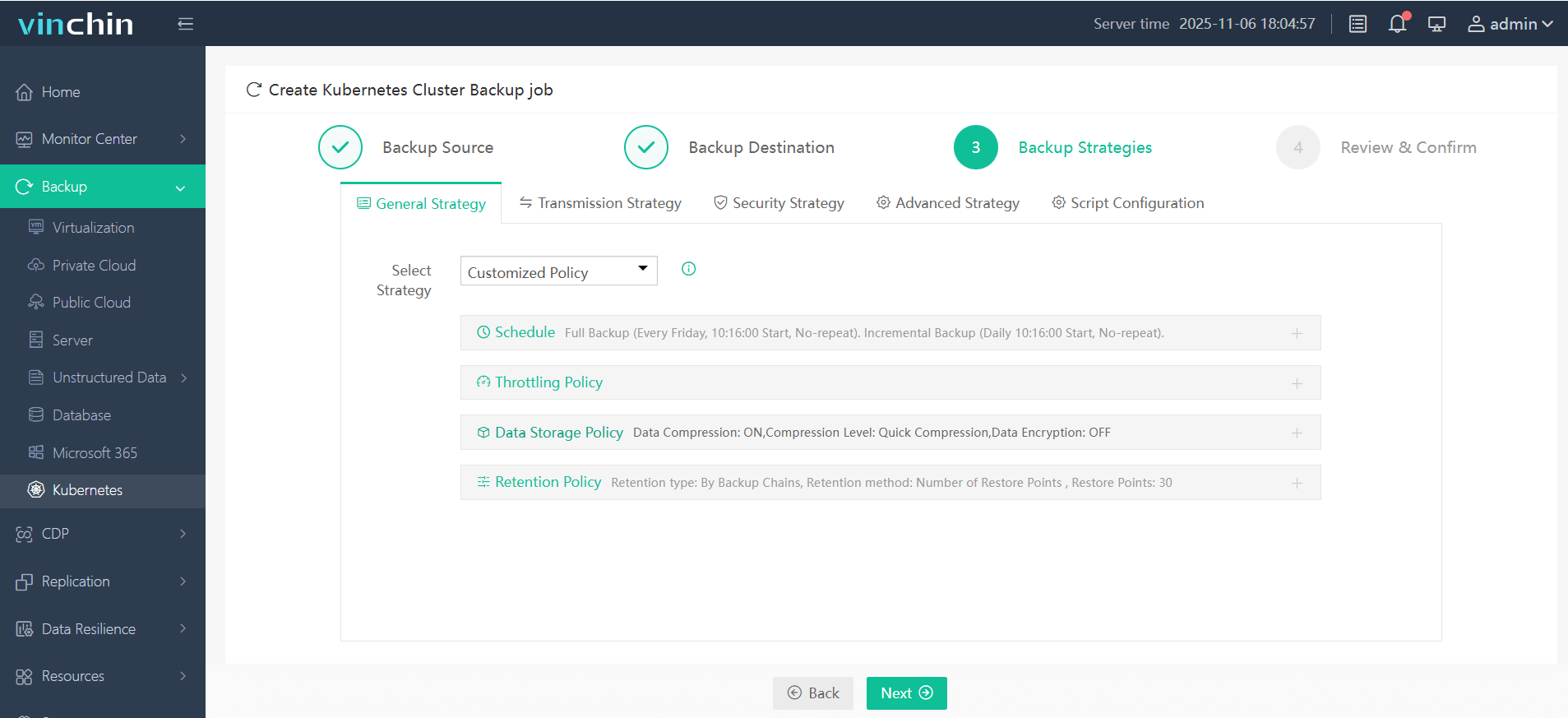

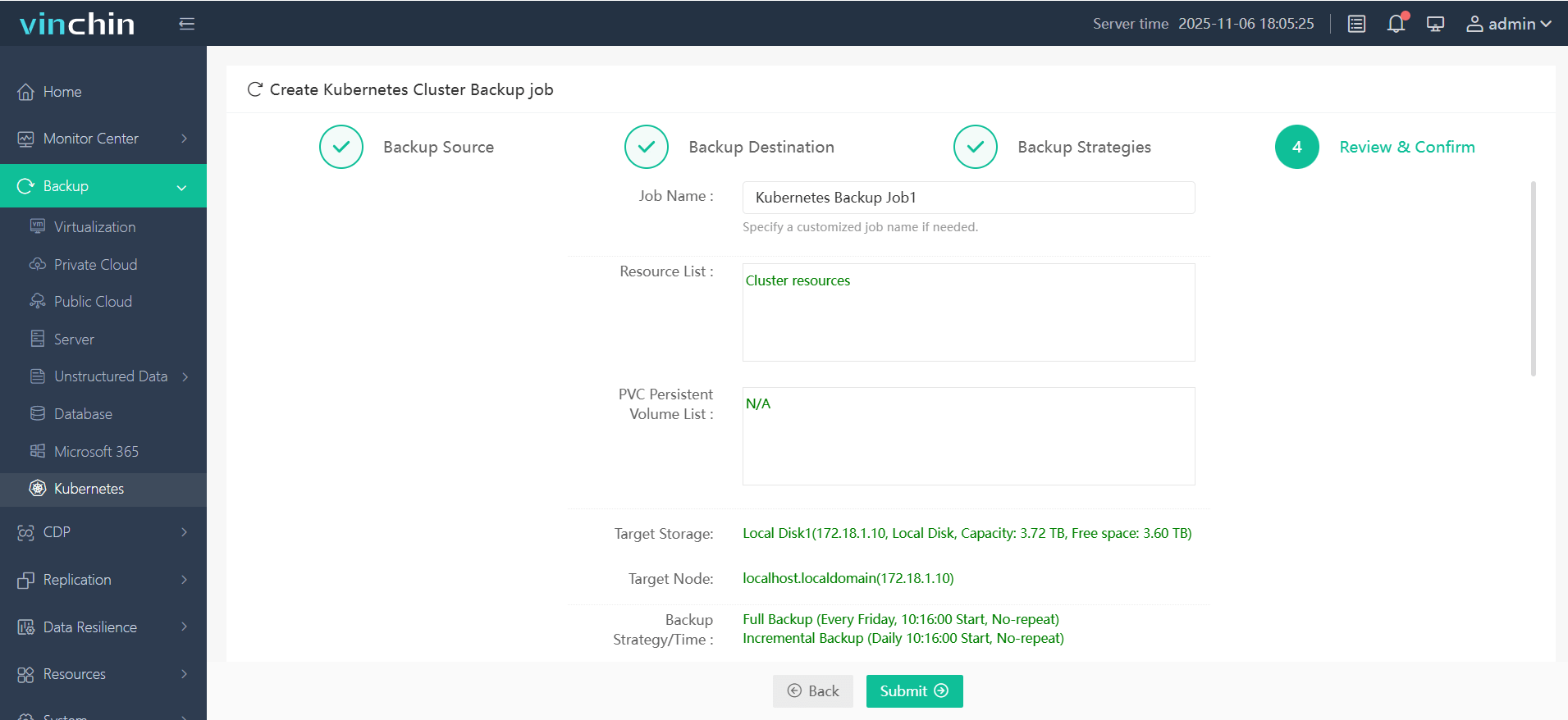

The intuitive web console of Vinchin Backup & Recovery streamlines backup operations into four straightforward steps tailored for Kubernetes environments:

Step 1. Select the backup source

Step 2. Choose the backup storage

Step 3. Define the backup strategy

Step 4. Submit the job

Trusted globally by enterprises of all sizes and backed by top industry ratings, Vinchin Backup & Recovery offers a fully featured free trial valid for up to sixty days.

Securing Kubernetes FAQs

Q1: How do I monitor containers at runtime without affecting performance?

A1: Deploy lightweight agents such as Falco configured with custom rules—they alert on suspicious activity while minimizing overhead compared to full tracing solutions.
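As an illustration of what a custom rule can look like, the sketch below flags a shell started inside a container. It assumes the spawned_process and container macros from Falco's default ruleset are loaded; the rule name, shell list, and priority are illustrative choices.

```yaml
# Hypothetical custom rule; relies on macros from Falco's default rules.
- rule: Shell spawned in container
  desc: Detect a shell process started inside a running container
  condition: spawned_process and container and proc.name in (bash, sh, zsh)
  output: "Shell in container (user=%user.name container=%container.name command=%proc.cmdline)"
  priority: WARNING
  tags: [container, shell]
```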

Q2: What's the best way to enforce Pod Security Standards?

A2: Enable the built-in PodSecurity admission controller and apply the appropriate levels (“restricted”, “baseline”, etc.) to each namespace based on the sensitivity of the workloads. You can find the recommended profiles and usage guidelines in the official Kubernetes documentation.
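For example, the levels are applied through namespace labels. The sketch below enforces the restricted level on a hypothetical payments namespace and also surfaces warnings and audit annotations for violations; the namespace name is an assumption for illustration.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: payments                     # illustrative namespace
  labels:
    # Reject pods that violate the "restricted" profile.
    pod-security.kubernetes.io/enforce: restricted
    pod-security.kubernetes.io/enforce-version: latest
    # Also warn clients and annotate audit events for violations.
    pod-security.kubernetes.io/warn: restricted
    pod-security.kubernetes.io/audit: restricted
```

Starting with only the warn and audit labels on an existing namespace lets you see what would be rejected before switching enforce on.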
