- What Is AWS Oracle RDS?
- What Is RMAN Backup?
- Why Use S3 for Backups?
- Prerequisites: Setting Up IAM Roles and Policies
- How to Perform AWS Oracle RDS RMAN Backup to S3 Natively?
- How to Use Data Pump for AWS Oracle RDS Backup to S3 Integration?
- Introducing Vinchin Backup & Recovery
- AWS Oracle RDS RMAN Backup to S3 FAQs
- Conclusion

Are you searching for a dependable way to back up your AWS Oracle RDS database using RMAN and store those backups in Amazon S3? Many operations administrators face this challenge when they need compliance or long-term retention. In this guide, we’ll break down every step of the process. We’ll explain key concepts, show you how to use both native RMAN and Data Pump methods, discuss prerequisites, highlight common pitfalls, and help you get your Oracle RDS backups safely into S3.

What Is AWS Oracle RDS?

Amazon Relational Database Service (RDS) for Oracle is a managed service that lets you run Oracle databases in the AWS cloud. With RDS, you do not manage hardware or patching—AWS handles much of that work. Still, advanced backup needs like long-term retention or offsite storage require using Oracle’s native tools through AWS-defined procedures since direct OS access is not available.

What Is RMAN Backup?

Oracle Recovery Manager (RMAN) is an integrated utility for backing up and restoring Oracle databases. It supports full, incremental, and archived log backups with features such as compression and encryption. In AWS RDS for Oracle environments, RMAN works through special stored procedures provided by AWS rather than direct command-line access. This means some features are limited compared to on-premises installations.

Why Use S3 for Backups?

Amazon S3 (Simple Storage Service) offers durable object storage at scale. Storing your Oracle backups in S3 gives you reliable long-term retention options along with easy access from anywhere. You can move older backups to lower-cost storage classes like S3 Glacier for further savings. S3 also integrates with AWS security controls and lifecycle policies—making it ideal for compliance needs or disaster recovery planning.

Prerequisites: Setting Up IAM Roles and Policies

Before starting any backup process from AWS Oracle RDS to S3 using RMAN or Data Pump, proper setup is essential. Without correct permissions or integration steps completed first, your backup jobs may fail.

First, create an Amazon S3 bucket dedicated to storing your database backups. Next, set up an IAM role that allows your RDS instance to upload files directly into this bucket. The IAM policy should grant at least these permissions: s3:PutObject, s3:GetObject, s3:ListBucket, s3:GetBucketLocation on your target bucket resources.

If server-side encryption with KMS keys is required by company policy or compliance rules, add relevant kms:Encrypt, kms:Decrypt, kms:GenerateDataKey actions as well.
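The permissions listed above can be written out as an IAM policy document. The following is a minimal sketch that builds one as a Python dictionary; the function name, the statement IDs, and the bucket name `example-oracle-backups` are illustrative assumptions, not values from an existing setup. Bucket-level actions are scoped to the bucket ARN and object-level actions to the objects inside it.

```python
import json


def build_backup_policy(bucket, kms_key_arn=None):
    """Return an IAM policy document (dict) for the role RDS uses to reach S3.

    `bucket` and `kms_key_arn` are supplied by the caller; nothing is looked up.
    """
    bucket_arn = f"arn:aws:s3:::{bucket}"
    statements = [
        {
            # Bucket-level actions apply to the bucket ARN itself.
            "Sid": "BucketLevelAccess",
            "Effect": "Allow",
            "Action": ["s3:ListBucket", "s3:GetBucketLocation"],
            "Resource": bucket_arn,
        },
        {
            # Object-level actions apply to the keys inside the bucket.
            "Sid": "ObjectLevelAccess",
            "Effect": "Allow",
            "Action": ["s3:PutObject", "s3:GetObject"],
            "Resource": f"{bucket_arn}/*",
        },
    ]
    if kms_key_arn:
        # Only needed when the bucket enforces SSE-KMS encryption.
        statements.append(
            {
                "Sid": "KmsAccess",
                "Effect": "Allow",
                "Action": ["kms:Encrypt", "kms:Decrypt", "kms:GenerateDataKey"],
                "Resource": kms_key_arn,
            }
        )
    return {"Version": "2012-10-17", "Statement": statements}


# Print the policy as JSON so it can be pasted into the IAM console.
print(json.dumps(build_backup_policy("example-oracle-backups"), indent=2))
```

Passing a KMS key ARN as the second argument appends the encryption statement; leaving it out keeps the policy limited to the four S3 actions.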

After creating the role with its policy attached:

1. Go to the RDS Console

2. Select your target DB instance

3. Open the Connectivity & security tab

4. Under Manage IAM roles, select your new role and choose S3_INTEGRATION as the feature

5. Click Add role

The option group associated with the instance must also include the S3_INTEGRATION option. It may take several minutes before the changes take effect.
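The role attachment can also be scripted rather than clicked through. The sketch below assembles the AWS CLI call `aws rds add-role-to-db-instance` as an argument list and only runs it when `dry_run` is switched off. The instance identifier and role ARN in the example are placeholders, and the helper names are assumptions made for illustration.

```python
import subprocess


def add_role_command(db_instance_id, role_arn):
    """Build the AWS CLI argument list that attaches an IAM role for S3 integration."""
    return [
        "aws", "rds", "add-role-to-db-instance",
        "--db-instance-identifier", db_instance_id,
        "--role-arn", role_arn,
        "--feature-name", "S3_INTEGRATION",
    ]


def attach_role(db_instance_id, role_arn, dry_run=True):
    """Return the command; execute it only when dry_run is False.

    Running it requires the AWS CLI to be installed and configured.
    """
    cmd = add_role_command(db_instance_id, role_arn)
    if not dry_run:
        subprocess.run(cmd, check=True)
    return cmd


# Placeholder identifiers; replace them with your own before a real run.
print(" ".join(attach_role(
    "example-oracle-db",
    "arn:aws:iam::123456789012:role/example-rds-s3-role",
)))
```

Keeping the default `dry_run=True` makes it easy to review the exact command before anything is changed on the instance.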

How to Perform AWS Oracle RDS RMAN Backup to S3 Natively?

Backing up an Oracle RDS database directly into Amazon S3 using RMAN involves several coordinated steps unique to AWS environments.

Start by ensuring your environment meets all prerequisites described above—S3 bucket created; IAM role attached; integration enabled between RDS instance and Amazon S3.

Here’s how you perform a native backup:

First, connect securely to your database using SQL*Plus or another compatible client tool.

Step 1: Create Directory Object

You must define a logical directory inside the database that points to where backup files will be staged:

EXEC rdsadmin.rdsadmin_util.create_directory(p_directory_name => 'BKP_DIR_STS');

This creates a directory object named BKP_DIR_STS within the managed file system of your RDS instance—not directly inside S3 yet.

Step 2: Set Archive Log Retention

Set how long archive logs should be kept before deletion:

EXEC rdsadmin.rdsadmin_util.set_configuration(name => 'archivelog retention hours', value => '48');

Adjust ‘48’ based on business requirements for point-in-time recovery windows.
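Retained archive logs occupy the instance's allocated storage, so the retention value is worth sizing before you raise it. The arithmetic is simply retention hours multiplied by the average redo generation rate. The sketch below uses a made-up rate of 1.5 GB per hour purely as an example; measure your own workload before relying on any figure.

```python
def archive_log_storage_gb(retention_hours, redo_gb_per_hour):
    """Estimate the storage consumed by retained archive logs, in GB."""
    if retention_hours < 0 or redo_gb_per_hour < 0:
        raise ValueError("inputs must be non-negative")
    return retention_hours * redo_gb_per_hour


# Example: 48 hours of retention at an assumed 1.5 GB of redo per hour.
estimate = archive_log_storage_gb(48, 1.5)
print(f"Estimated archive log footprint: {estimate} GB")  # 72.0 GB
```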

Step 3: Run RMAN Backup Using Stored Procedure

AWS provides stored procedures in the rdsadmin.rdsadmin_rman_util package designed specifically for managed environments:

EXEC rdsadmin.rdsadmin_rman_util.backup_database_full( p_owner => 'SYS', p_directory_name => 'BKP_DIR_STS', p_tag => 'BACKUP_202406', p_rman_to_dbms_output => FALSE );

This command triggers an online full backup of all datafiles and writes the backup pieces to the BKP_DIR_STS directory. Archived redo logs are backed up separately with rdsadmin.rdsadmin_rman_util.backup_archivelog_all, which takes the same p_owner and p_directory_name parameters.

Step 4: Upload Backup Files from Directory Object into Amazon S3

Once files are staged locally in the BKP_DIR_STS directory:

SELECT rdsadmin.rdsadmin_s3_tasks.upload_to_s3( p_bucket_name => '<your-s3-bucket-name>', p_prefix => '', p_s3_prefix => 'oracle-backups/', p_directory_name => 'BKP_DIR_STS' ) AS task_id FROM dual;

Replace <your-s3-bucket-name> with the name of the bucket you created earlier, and adjust the S3 prefix to match how you organize folders within the bucket.

With an empty p_prefix, this uploads every file in the staging directory to the specified location in the bucket. AWS handles the transfer internally, so no manual file copy is required. The call returns a task ID and the upload runs asynchronously, so confirm that it has completed before deleting any staged files.

Step 5: Automate Backups Using Scheduling Tools

For regular automated protection without manual intervention:

1. Wrap the SQL commands above in a PL/SQL procedure

2. Schedule execution using DBMS_SCHEDULER jobs if your account privileges allow it

Alternatively, trigger external automation via Lambda functions that call these procedures over a secure connection at the desired interval (daily or weekly).

Note that some scheduling features may be restricted depending on the RDS for Oracle engine version in use; always consult the latest AWS documentation.
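The external-trigger option can be sketched as a small runner that a Lambda handler, or any other scheduler, might call. Here `execute` is a hypothetical callable that wraps whichever database driver you use, and the statements in the example are placeholders standing in for the procedure calls from the earlier steps. The runner stops at the first failure so that an upload is never attempted after a failed backup.

```python
def run_steps(execute, steps):
    """Run (label, sql) pairs in order and stop at the first failure.

    `execute` is a caller-supplied function that sends one statement to the
    database and raises an exception if the statement fails.
    """
    results = []
    for label, sql in steps:
        try:
            execute(sql)
        except Exception as exc:  # record the failure and stop the chain
            results.append({"step": label, "ok": False, "error": str(exc)})
            break
        results.append({"step": label, "ok": True, "error": None})
    return results


# Demonstration with a stand-in executor that only prints the statement.
example_steps = [
    ("backup", "BEGIN NULL; END;"),  # placeholder for the RMAN backup call
    ("upload", "BEGIN NULL; END;"),  # placeholder for the S3 upload call
]
for outcome in run_steps(lambda sql: print("would run:", sql), example_steps):
    print(outcome)
```

In a real Lambda function the list returned by `run_steps` could be logged or forwarded to a notification step, which keeps the handler itself free of driver-specific code.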

Step 6: Set Up Notifications (Optional)

To receive alerts about job status:

1. Create an SNS topic

2. Configure notification triggers upon job completion/failure events

This helps ensure prompt response if issues arise during scheduled runs.
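A notification step might look like the sketch below. It builds a subject and a JSON message body, then hands them to a `publish` callable that accepts `TopicArn`, `Subject`, and `Message` keyword arguments, which matches the shape of the `publish` method on a boto3 SNS client. The job name, topic ARN, and message fields are assumptions chosen for the example.

```python
import json


def build_notification(job_name, succeeded, detail=""):
    """Return the Subject and Message for a backup status notification."""
    status = "SUCCEEDED" if succeeded else "FAILED"
    # SNS subjects must stay under 100 characters, so truncate defensively.
    subject = f"[{status}] {job_name}"[:99]
    message = json.dumps(
        {"job": job_name, "status": status, "detail": detail}, sort_keys=True
    )
    return {"Subject": subject, "Message": message}


def notify(publish, topic_arn, job_name, succeeded, detail=""):
    """Send the notification through a caller-supplied publish function."""
    payload = build_notification(job_name, succeeded, detail)
    publish(TopicArn=topic_arn, **payload)
    return payload


# Stand-in publisher that prints instead of calling SNS.
notify(
    lambda **kwargs: print(kwargs),
    "arn:aws:sns:us-east-1:123456789012:example-backup-topic",
    "nightly-rman-backup",
    succeeded=False,
    detail="upload task did not finish",
)
```

Injecting `publish` keeps the formatting logic testable without AWS credentials; in production it would typically be the SNS client's own `publish` method.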

Step 7: Verify Success & Clean Up Old Files

Check the contents of the designated Amazon S3 bucket after each run and confirm that the expected backup pieces (datafile, archived log, and control file backups) appear under the prefix used above.

Monitor notifications regularly so failed jobs don’t go unnoticed.
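The bucket check can be automated with a small summary over an object listing. The sketch below expects a list of dictionaries with `Key` and `Size` entries, the same shape as the `Contents` list returned by an S3 list-objects call, and flags zero-byte files that usually indicate an incomplete upload. The sample keys are invented for the demonstration.

```python
def summarize_backup_objects(objects, prefix):
    """Summarize the objects stored under `prefix`.

    Returns the number of matching objects, their total size in bytes,
    and the keys of any zero-byte objects.
    """
    matching = [obj for obj in objects if obj["Key"].startswith(prefix)]
    return {
        "count": len(matching),
        "total_bytes": sum(obj["Size"] for obj in matching),
        "empty_keys": [obj["Key"] for obj in matching if obj["Size"] == 0],
    }


# Invented listing used only to show the output shape.
sample = [
    {"Key": "oracle-backups/piece_1", "Size": 1024},
    {"Key": "oracle-backups/piece_2", "Size": 0},
    {"Key": "other/file", "Size": 10},
]
print(summarize_backup_objects(sample, "oracle-backups/"))
```

A count of zero or a non-empty `empty_keys` list is a reasonable condition for raising a failure alert.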

Use S3 lifecycle policies to transition old backups automatically into cheaper storage classes such as S3 Glacier after a defined period, or to delete them once they exceed your company’s retention standard.
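A lifecycle rule of this kind can be expressed as a configuration document. The sketch below builds one rule that moves objects under a prefix to the `GLACIER` storage class and later expires them. The rule ID and the 30-day and 365-day values are illustrative assumptions; the resulting dictionary follows the structure S3 expects for a bucket lifecycle configuration.

```python
import json


def build_lifecycle_configuration(prefix, glacier_after_days, expire_after_days):
    """Return an S3 lifecycle configuration with one transition-and-expire rule."""
    if expire_after_days <= glacier_after_days:
        raise ValueError("expiration must come after the Glacier transition")
    return {
        "Rules": [
            {
                "ID": "archive-then-expire-backups",  # illustrative rule name
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"}
                ],
                "Expiration": {"Days": expire_after_days},
            }
        ]
    }


# Example: archive after 30 days, delete after one year.
print(json.dumps(build_lifecycle_configuration("oracle-backups/", 30, 365), indent=2))
```

The guard against an expiration earlier than the transition catches a common configuration mistake before the document reaches S3.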

How to Use Data Pump for AWS Oracle RDS Backup to S3 Integration?

Sometimes exporting logical data structures instead of full physical images makes sense—for example during migrations or selective schema exports between test/dev/prod environments.

Here’s how Data Pump works together with Amazon S3:

Begin by confirming prerequisites are met—a dedicated export bucket exists; appropriate IAM role attached; integration enabled.

Connect securely via SQL*Plus or similar tool.

Step 1: Create Directory Object Pointing Toward Export Area

Define where dump (.dmp) files will be written prior to uploading:

EXEC rdsadmin.rdsadmin_util.create_directory(p_directory_name => 'DATA_PUMP_DIR');

Step 2: Export Logical Objects Using Data Pump Utility

Run export command targeting chosen schemas/tablespaces/users etc., specifying output path as DATA_PUMP_DIR:

expdp admin/password@ORCL schemas=MYSCHEMA directory=DATA_PUMP_DIR dumpfile=mydumpfile.dmp logfile=export.log

This generates .dmp file(s) plus log output inside DATA_PUMP_DIR staging area.
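Putting the password on the command line exposes it in shell history and process listings. One way around that is to move the export options into a parameter file and let expdp prompt for the password. The sketch below generates such a file; the helper name and the file name `export.par` are assumptions, while `schemas`, `directory`, `dumpfile`, and `logfile` are the same expdp parameters used in the command above.

```python
def build_expdp_parfile(schemas, directory, dumpfile, logfile):
    """Return the text of a Data Pump export parameter file."""
    if not schemas:
        raise ValueError("at least one schema is required")
    lines = [
        f"schemas={','.join(schemas)}",
        f"directory={directory}",
        f"dumpfile={dumpfile}",
        f"logfile={logfile}",
    ]
    return "\n".join(lines) + "\n"


# Generate the parameter file contents for the export shown above.
parfile_text = build_expdp_parfile(
    ["MYSCHEMA"], "DATA_PUMP_DIR", "mydumpfile.dmp", "export.log"
)
print(parfile_text)
```

After saving the text as `export.par`, the export could be started with `expdp admin@ORCL parfile=export.par`, which prompts for the password instead of reading it from the command line.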

Step 3: Upload Dump File(s) Into Your Designated Amazon S3 Bucket

Transfer the exported dump files from the staging directory into S3 using the built-in procedure:

SELECT rdsadmin.rdsadmin_s3_tasks.upload_to_s3( p_bucket_name => '<your-s3-bucket-name>', p_prefix => '', p_s3_prefix => 'datapump_exports/', p_directory_name => 'DATA_PUMP_DIR' ) AS task_id FROM dual;

Again replace placeholders appropriately based on environment specifics.

Step 4 (Optional): Restore Data From Cloud Storage When Needed

To restore exported objects elsewhere, download the .dmp file(s) from the designated folder in Amazon S3 onto the target system, then use the standard impdp utility referencing the downloaded file(s).

When the target is another managed RDS instance, there is no OS-level access, so the dump file has to land in a directory object on that instance first. RDS provides rdsadmin.rdsadmin_s3_tasks.download_from_s3 for this; a temporary EC2-based staging server running a compatible version of Oracle Database is an alternative when that route is not available.

The Data Pump method excels at migrations involving only part of the overall dataset, or when moving data between regions, accounts, or environments without copying the entire physical image every time.

Introducing Vinchin Backup & Recovery

For organizations seeking streamlined enterprise-grade protection beyond native tools, Vinchin Backup & Recovery stands out as a professional solution supporting today’s leading databases—including robust coverage for Oracle alongside MySQL, SQL Server, MariaDB, PostgreSQL, PostgresPro, and TiDB platforms commonly found in hybrid cloud environments like AWS RDS deployments. Key features such as batch database backup scheduling, flexible data retention policies including GFS support, integrity check routines ensuring recoverability assurance, restore-to-new-server capability for rapid disaster recovery scenarios, and comprehensive cloud/tape archiving empower IT teams with reliable automation while maintaining compliance standards across diverse infrastructures—all accessible through one unified platform.

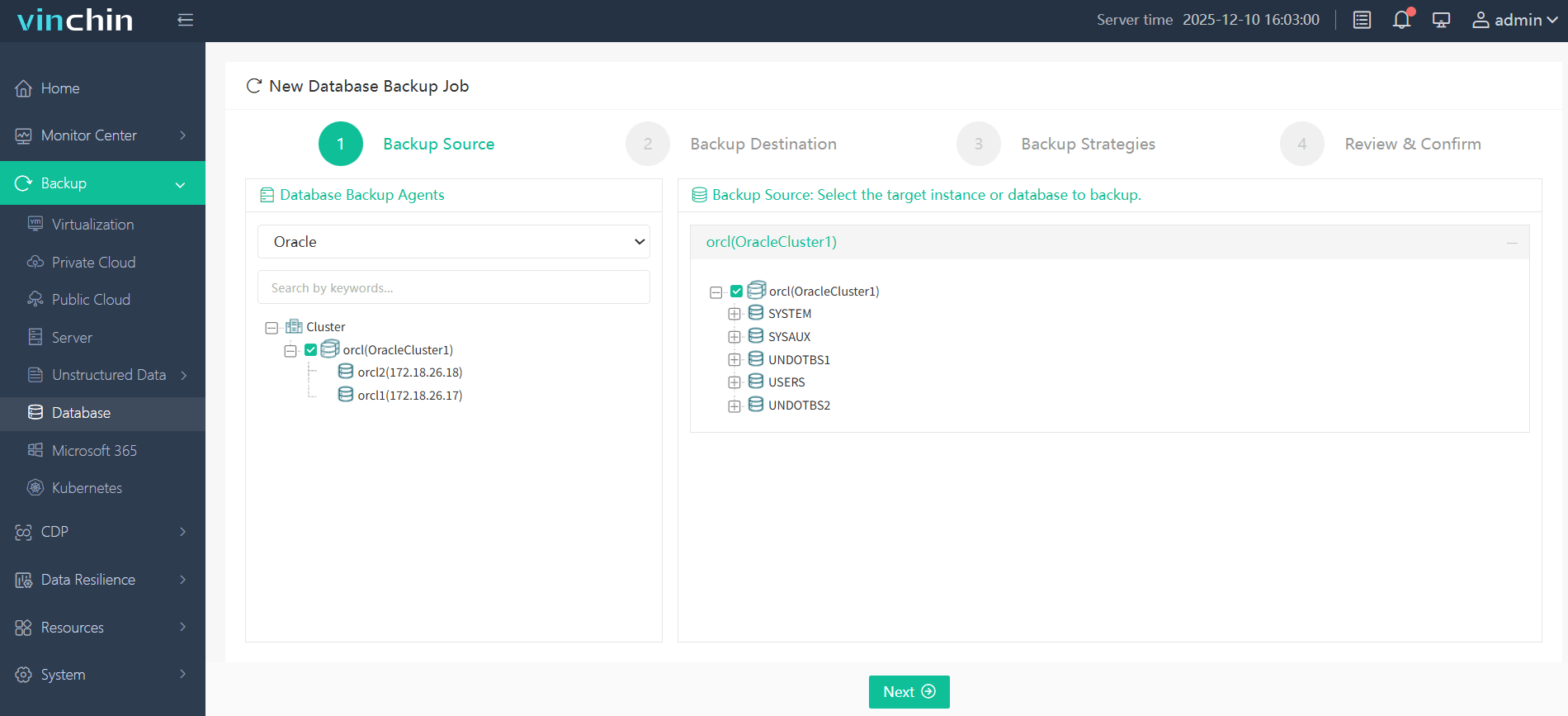

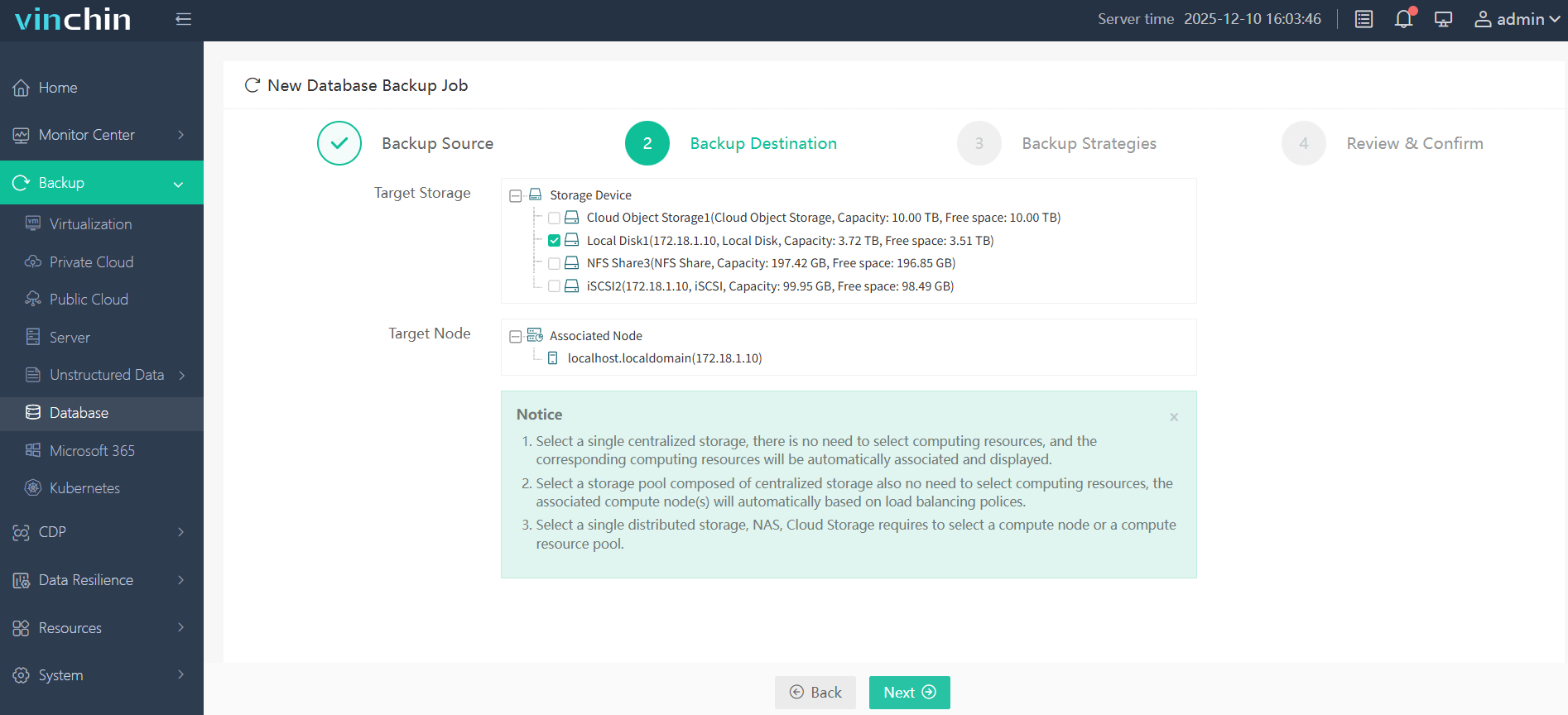

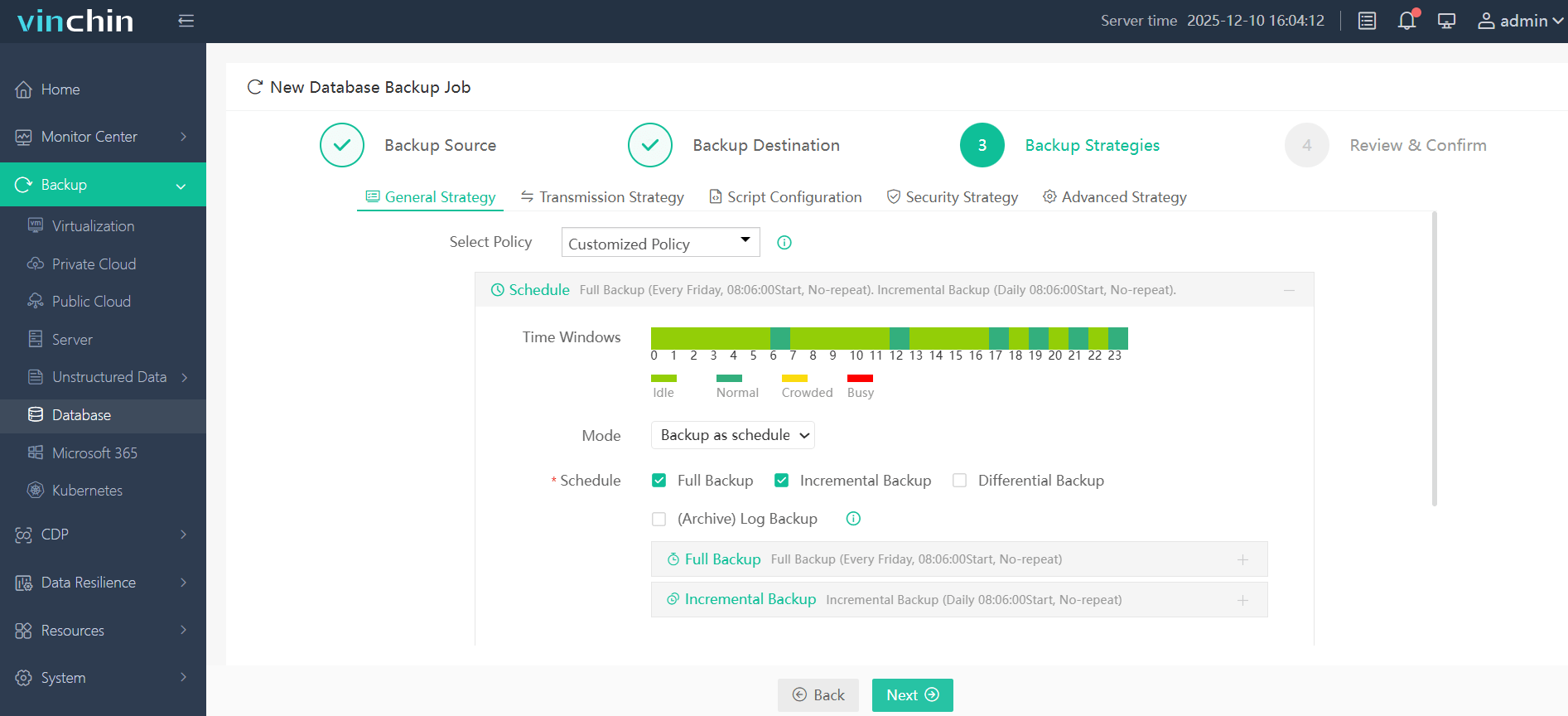

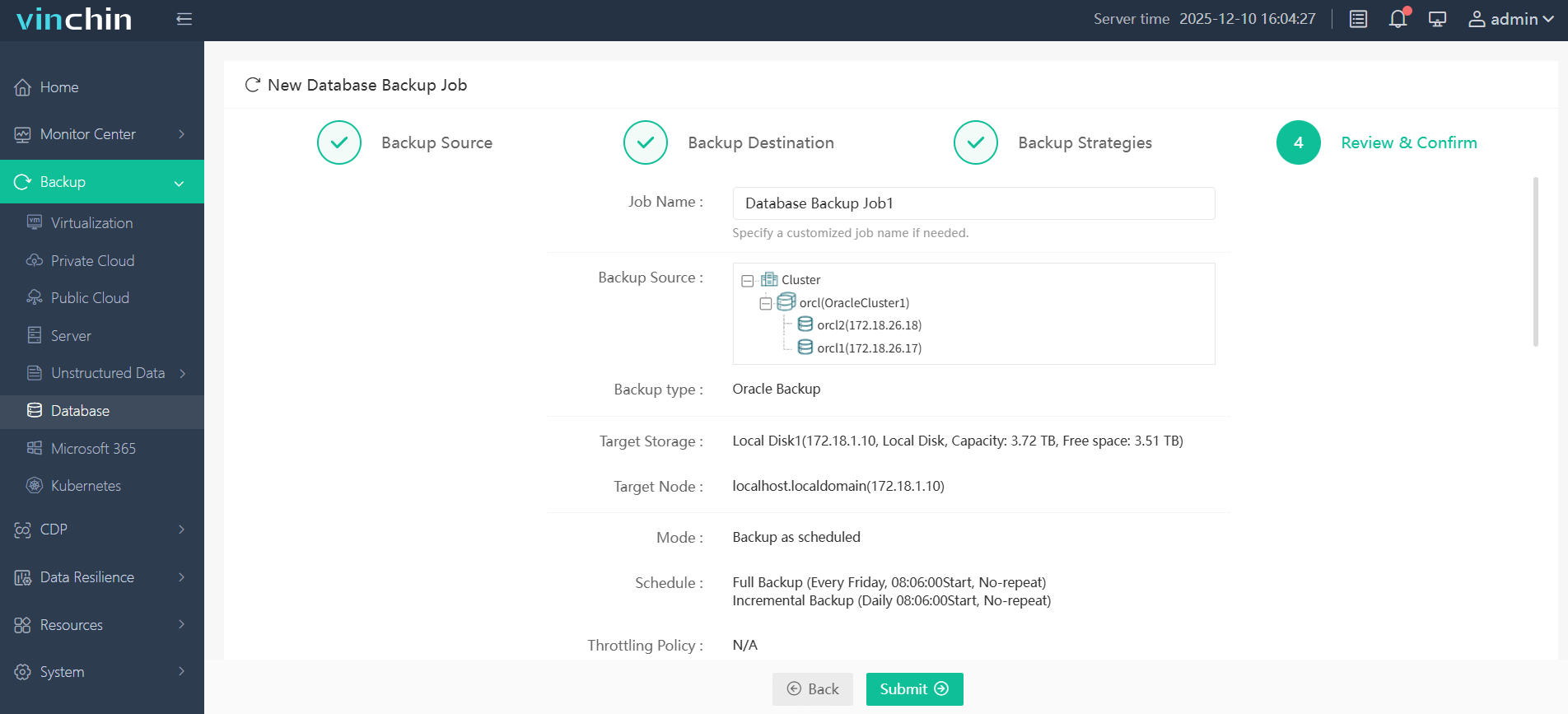

The intuitive web console makes protecting an AWS Oracle RDS database straightforward in just four steps:

Step 1. Select the Oracle database to back up

Step 2. Choose the backup storage

Step 3. Define the backup strategy

Step 4. Submit the job

Vinchin Backup & Recovery is recognized worldwide among enterprises seeking trusted data protection solutions, and it offers a risk-free 60-day trial so you can experience its full feature set firsthand.

AWS Oracle RDS RMAN Backup to S3 FAQs

Q1: Can I restore an RMAN backup stored in my Amazon S3 bucket directly onto another managed RDS instance?

A1: No. You must first restore onto a standalone Oracle installation hosted on EC2, then migrate the data into another managed instance if needed later.

Q2: What’s required if my organization mandates encrypted backups uploaded from my production environment?

A2: Add the KMS-related permissions (kms:Encrypt, kms:Decrypt, kms:GenerateDataKey) alongside the core S3 actions in the IAM policy attached to the role assigned to the target DB instance.

Q3: How do I schedule recurring automatic uploads without manual intervention?

A3: Place the upload logic inside a PL/SQL procedure and schedule it via DBMS_SCHEDULER, or trigger it externally through Lambda or other infrastructure automation tools at the desired interval.

Conclusion

Backing up AWS Oracle RDS databases natively using either RMAN or Data Pump gives strong control over what gets protected—and where it lives long term—in scalable cloud storage like Amazon S3 buckets tailored per project needs.

For even greater automation plus advanced monitoring and reporting capabilities, Vinchin provides robust solutions trusted globally by enterprises seeking peace of mind around critical data protection tasks.
