- What is self managed Kubernetes?
- Why choose self managed Kubernetes?
- Self managed vs managed Kubernetes
- How to install self managed Kubernetes with kubeadm
- How to install self managed Kubernetes with kops
- How to maintain a self managed Kubernetes cluster?
- Protecting Your Cluster with Vinchin Backup & Recovery
- Self Managed Kubernetes FAQs
- Conclusion

Kubernetes is now essential for deploying modern applications at scale. But when you need to run clusters, you face a choice: managed or self managed Kubernetes? This guide explains what self managed Kubernetes means, why it matters, how it compares to managed services, how to install it step by step, how to keep your cluster healthy—and how to protect your workloads with confidence.

What is self managed Kubernetes?

Self managed Kubernetes means you install, configure, and operate your own clusters instead of letting a cloud provider do it all. You manage every layer—from etcd databases and API servers in the control plane down to worker nodes running containers. This approach gives you full flexibility over networking rules, storage options, security settings—even which Linux distribution runs underneath.

You decide exactly how your environment works. But this also means you take responsibility for setup, upgrades, scaling—and fixing problems when they arise.

Why choose self managed Kubernetes?

Choosing self managed Kubernetes gives you unmatched control over your infrastructure. You can tailor every component—operating system versions, container runtimes like containerd or CRI-O, network plugins—to fit strict compliance needs or unique workloads. Many organizations prefer this route if they run on-premises data centers or want hybrid cloud setups spanning multiple providers.

But there’s a trade-off: all management tasks fall on your team’s shoulders. You handle installation scripts and troubleshoot errors yourself instead of relying on vendor support channels.

Some teams pick self managed Kubernetes because:

They need custom integrations unavailable in hosted platforms

They want freedom from vendor lock-in

Their business requires strict data residency controls

Is this extra work worth it? For many IT operations teams who value flexibility above convenience—it absolutely is.

Self managed vs managed Kubernetes

Let's break down these two approaches so you can make an informed decision:

Managed Kubernetes services automate most operational tasks—control plane provisioning, patching nodes behind the scenes—even auto-scaling resources based on demand. This reduces maintenance headaches but often limits deep customization or forces reliance on one provider's ecosystem.

With self managed Kubernetes:

You customize every cluster component

You deploy anywhere: bare metal servers in your office or virtual machines across clouds

No single vendor controls your upgrade schedule—or access to features

But expect:

More complex day-to-day operations

Responsibility for patching vulnerabilities fast

Need for strong internal expertise

How to install self managed Kubernetes with kubeadm

Kubeadm helps automate much of the cluster setup while still giving you hands-on control over configuration details—a great balance between ease-of-use and flexibility favored by many administrators today.

Prerequisites

Before starting installation:

Prepare at least one control plane node plus one or more worker nodes—all running supported Linux distributions such as Ubuntu 20.04+ or CentOS 7+.

Each node should have at least 2 vCPUs and 4GB RAM; production environments may require more depending on workload size.

Disable swap permanently: run sudo swapoff -a, then remove any swap entries from /etc/fstab.

Set unique hostnames (hostnamectl set-hostname <name>) so nodes are easy to identify later.

Ensure ports required by Kubernetes are open (6443/tcp for the API server; others vary by CNI).
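The prerequisite checks above can be scripted before running kubeadm. The following is a minimal sketch, not an official tool: the function names are hypothetical, and the memory threshold sits slightly under 4 GiB on the assumption that MemTotal excludes memory reserved by the kernel and firmware.

```bash
# Hypothetical preflight helpers for a prospective Kubernetes node.

# Succeeds when the CPU count and memory (in kB) meet the 2 vCPU / 4 GB guideline.
# The 3800000 kB threshold is an assumption, chosen to tolerate reserved memory.
meets_minimum() {
  cpus=$1
  mem_kb=$2
  [ "$cpus" -ge 2 ] && [ "$mem_kb" -ge 3800000 ]
}

# Succeeds when no swap device is active (/proc/swaps holds only its header line).
swap_is_off() {
  [ "$(wc -l < /proc/swaps)" -le 1 ]
}

# Prints the given fstab with active swap entries commented out.
# The file itself is not modified, so the result can be reviewed first.
comment_swap_entries() {
  sed -E 's/^([^#].*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

# Example usage on a node:
#   meets_minimum "$(nproc)" "$(awk '/MemTotal/ {print $2}' /proc/meminfo)" || echo "node is undersized"
#   swap_is_off || echo "swap is still active"
#   comment_swap_entries /etc/fstab
```

Printing the edited fstab instead of rewriting it in place keeps the change reviewable; once the output looks right, it can be written back with sudo.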

Installation Steps

First update packages:

sudo apt-get update

sudo apt-get install -y apt-transport-https ca-certificates curl

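Add the Kubernetes package signing key. The legacy Google-hosted repositories (apt.kubernetes.io and packages.cloud.google.com) are no longer available; packages are now published on pkgs.k8s.io, with one repository per minor version. Replace v1.30 below with the minor version you want to install:

curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.30/deb/Release.key | sudo gpg --dearmor | sudo tee /usr/share/keyrings/k8s.gpg > /dev/null

Add repository:

echo "deb [signed-by=/usr/share/keyrings/k8s.gpg] https://pkgs.k8s.io/core:/stable:/v1.30/deb/ /" | sudo tee /etc/apt/sources.list.d/kubernetes.list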

Install kubelet/kubeadm/kubectl:

sudo apt-get update

sudo apt-get install -y kubelet kubeadm kubectl

sudo apt-mark hold kubelet kubeadm kubectl

Initialize control plane node:

sudo kubeadm init --pod-network-cidr=192.168.0.0/16

(Replace pod-network-cidr if using another CNI plugin)
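To illustrate how the pod CIDR depends on the network plugin, here is a small sketch. The helper name is hypothetical; the two values are the defaults used by the stock Calico and Flannel manifests, and any other plugin should be checked against its own documentation.

```bash
# Hypothetical helper: prints the pod CIDR that a CNI plugin's stock manifest expects.
default_pod_cidr() {
  case "$1" in
    calico)  echo "192.168.0.0/16" ;;
    flannel) echo "10.244.0.0/16" ;;
    *)       echo "unknown CNI: $1" >&2; return 1 ;;
  esac
}

# Example usage on the control plane node:
#   sudo kubeadm init --pod-network-cidr="$(default_pod_cidr flannel)"
```

Whichever range you choose should not overlap with the node network or the service CIDR.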

Set up local admin config:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Install a network plugin such as Calico:

kubectl apply -f https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/calico.yaml

Join the worker nodes by running, on each of them, the kubeadm join command printed at the end of kubeadm init. If you lose it, regenerate it on the control plane node with kubeadm token create --print-join-command.
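For reference, the join command has a fixed shape: an API server endpoint, a bootstrap token, and a hash of the cluster CA certificate. The sketch below assembles it from those parts and rejects tokens that do not match the kubeadm token format. The function names and the sample values are hypothetical.

```bash
# Succeeds when the argument looks like a kubeadm bootstrap token:
# six characters, a dot, then sixteen characters, all lowercase letters or digits.
valid_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Prints the join command for the given endpoint, token, and CA certificate hash.
build_join_command() {
  endpoint=$1
  token=$2
  ca_hash=$3
  valid_bootstrap_token "$token" || { echo "malformed token: $token" >&2; return 1; }
  echo "kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash sha256:$ca_hash"
}

# Example usage (placeholder values):
#   build_join_command 10.0.0.10:6443 abcdef.0123456789abcdef <ca-cert-hash>
```

In practice the output of kubeadm token create --print-join-command is the simplest source for these values; the sketch only shows what the pieces are.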

Check status after setup:

kubectl get nodes

kubectl cluster-info
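Nodes usually report NotReady until the network plugin is running, so the status check is worth automating. A minimal sketch, assuming the standard column layout of kubectl get nodes (name first, status second); the function name is hypothetical.

```bash
# Reads `kubectl get nodes --no-headers` output on stdin and prints the names
# of nodes whose STATUS does not start with "Ready".
# "Ready,SchedulingDisabled" (a cordoned node) still counts as ready here.
not_ready_nodes() {
  awk '$2 !~ /^Ready/ { print $1 }'
}

# Example usage:
#   kubectl get nodes --no-headers | not_ready_nodes
# Or block until every node is ready:
#   kubectl wait --for=condition=Ready nodes --all --timeout=300s
```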

How to install self managed Kubernetes with kops

Kops streamlines the creation of production-grade clusters. It is especially popular among AWS users, and it also officially supports GCP, with several other providers at beta or alpha maturity.

Prerequisites

Before launching a kops-based cluster, make sure you have:

An AWS account with IAM permissions sufficient for EC2, S3, VPC, and DNS changes

The AWS CLI installed and configured

A registered DNS domain name

An S3 bucket for the kops state store, created in the same region as the intended cluster

The latest stable versions of kops, kubectl, and jq installed locally

Optionally, Ansible, if you follow the playbook-driven approach (not mandatory)
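The S3 bucket prerequisite can be prepared with the AWS CLI. The sketch below only prints the commands so they can be reviewed before running; the function name and bucket name are hypothetical. Enabling versioning is a common precaution for the state store, since it allows an earlier cluster definition to be recovered.

```bash
# Prints the AWS CLI commands that create a versioned bucket for the kops state store.
# us-east-1 is special-cased because create-bucket does not take a
# LocationConstraint for that region.
state_store_commands() {
  bucket=$1
  region=$2
  if [ "$region" = "us-east-1" ]; then
    echo "aws s3api create-bucket --bucket $bucket --region $region"
  else
    echo "aws s3api create-bucket --bucket $bucket --region $region --create-bucket-configuration LocationConstraint=$region"
  fi
  echo "aws s3api put-bucket-versioning --bucket $bucket --versioning-configuration Status=Enabled"
}

# Example usage (bucket name is a placeholder):
#   state_store_commands my-kops-state us-west-2
```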

Installation Steps

Clone demo repo if following playbook method (optional):

git clone https://github.com/x-cellent/kubernetes-cluster-demo.git && cd kubernetes-cluster-demo/

ansible-playbook kops-installation.yml # optional step if using Ansible automation

Or download and install kops directly (replace v1.x.y with the release you want), then set the environment variables and create the cluster:

curl --location "https://github.com/kubernetes/kops/releases/download/v1.x.y/kops-linux-amd64" --output kops && chmod +x kops && sudo mv kops /usr/local/bin/

export PATH=$PATH:/usr/local/bin/

export KOPS_STATE_STORE=s3://<your-s3-bucket>

export AWS_ACCESS_KEY_ID="YOUR_KEY"

export AWS_SECRET_ACCESS_KEY="YOUR_SECRET"

export NAME=mycluster.example.com # replace accordingly!

kops create cluster --zones=us-west-2a ${NAME}

kops update cluster ${NAME} --yes

# Wait several minutes...

kops validate cluster # confirms readiness!

kubectl get nodes # verify connectivity!

If you have not already configured a Route53 hosted zone, create one via the AWS CLI (aws route53 create-hosted-zone). Update the DNS records at registrar level so clients resolve the API endpoint correctly during the bootstrap phase.
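Because the kops commands above rely on exported variables, a small guard can catch a missing one before anything is created. This is a sketch with a hypothetical helper name; it checks only that each variable is set and non-empty.

```bash
# Succeeds only when every named environment variable is set and non-empty.
# Names of missing variables are reported on stderr.
require_env() {
  missing=0
  for name in "$@"; do
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing environment variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example usage:
#   require_env KOPS_STATE_STORE NAME AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY \
#     && kops create cluster --zones=us-west-2a "${NAME}"
```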

To delete everything cleanly later: kops delete cluster ${NAME} --yes.

How to maintain a self managed Kubernetes cluster?

Running your own Kubernetes cluster requires ongoing maintenance—not just the initial setup. You’ll need to perform regular updates, monitor resource usage, scale capacity as needed, enforce strong security practices, and back up critical data to ensure fast recovery when issues occur.

1. Plan Your Upgrades

Use tools like kubeadm upgrade plan to understand safe upgrade paths. Always test upgrades in a staging environment before applying them to production.
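One rule worth encoding in upgrade tooling is that kubeadm upgrades move one minor version at a time. The sketch below checks that rule for a pair of version strings; the function names are hypothetical, and it assumes both versions share the same major number.

```bash
# Prints the minor number of a version such as 1.29.3 or v1.30.1.
minor_of() {
  printf '%s\n' "${1#v}" | cut -d. -f2
}

# Succeeds when moving from the first version to the second stays within the
# same minor release or advances by exactly one minor release.
can_upgrade() {
  from_minor=$(minor_of "$1")
  to_minor=$(minor_of "$2")
  step=$((to_minor - from_minor))
  [ "$step" -ge 0 ] && [ "$step" -le 1 ]
}

# Example usage on the control plane node:
#   can_upgrade "$(kubeadm version -o short)" v1.30.1 && sudo kubeadm upgrade plan
#   sudo kubeadm upgrade apply v1.30.1
```

After the control plane is upgraded, each node is typically drained, has its kubelet package upgraded, and is then uncordoned.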

2. Monitor Cluster Health Continuously

Use metrics-server together with Prometheus/Grafana dashboards to track CPU, memory, and network usage across nodes and pods. Set up alerts so anomalies are detected immediately.
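Before a full Prometheus setup is in place, metrics-server data can already drive a simple check. A minimal sketch, assuming the usual column layout of kubectl top nodes (name, CPU cores, CPU%, memory, memory%); the function name and the threshold are illustrative.

```bash
# Reads `kubectl top nodes --no-headers` output on stdin and prints the names
# of nodes whose CPU% or MEMORY% exceeds the given limit.
hot_nodes() {
  awk -v limit="$1" '{
    cpu = $3
    mem = $5
    sub(/%/, "", cpu)
    sub(/%/, "", mem)
    if (cpu + 0 > limit + 0 || mem + 0 > limit + 0) print $1
  }'
}

# Example usage (requires metrics-server):
#   kubectl top nodes --no-headers | hot_nodes 80
```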

3. Scale Based on Demand

Adjust worker node counts based on workload patterns. You can do this manually using kubectl cordon/drain/delete or automate the process with scripts triggered by load metrics.
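The manual scale-down sequence can be wrapped in a small function. This is a sketch under stated assumptions: the function names and the DRY_RUN variable are hypothetical, and the drain flags shown are common choices rather than requirements.

```bash
# Runs a command, or only prints it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Removes a worker node: stop new scheduling, evict its pods, then delete the node object.
# Each step runs only if the previous one succeeded.
remove_node() {
  node=$1
  run kubectl cordon "$node" &&
    run kubectl drain "$node" --ignore-daemonsets --delete-emptydir-data &&
    run kubectl delete node "$node"
}

# Example usage:
#   DRY_RUN=1; remove_node worker-2   # review the commands first
#   DRY_RUN=0; remove_node worker-2
```

Note that --delete-emptydir-data discards emptyDir volume contents on the drained node, so it should be dropped if pods keep data there that matters.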

4. Prioritize Security

Enable and enforce RBAC (enabled by default), review audit logs regularly, rotate secrets and certificates, and restrict pod privileges using the Pod Security Standards (or PodSecurityPolicy on clusters older than v1.25, the release that removed it).
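With Pod Security Admission, the standards are applied per namespace through a label. The sketch below validates the level and prints the corresponding command; the function name and namespace are hypothetical.

```bash
# Prints the kubectl command that enforces a Pod Security Standards level
# on a namespace. Valid levels are privileged, baseline, and restricted.
psa_label_command() {
  namespace=$1
  level=$2
  case "$level" in
    privileged|baseline|restricted) ;;
    *) echo "unknown Pod Security level: $level" >&2; return 1 ;;
  esac
  echo "kubectl label --overwrite namespace $namespace pod-security.kubernetes.io/enforce=$level"
}

# Example usage:
#   psa_label_command payments restricted
# On kubeadm clusters, certificate expiry can be reviewed with:
#   sudo kubeadm certs check-expiration
```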

5. Back Up Critical Data

Take frequent etcd snapshots and store Deployment, Service, ConfigMap, and Secret YAML manifests outside the cluster to maintain a reliable disaster recovery plan.
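A snapshot routine might look like the following sketch. It assumes a kubeadm-built cluster, where the etcd certificates live under /etc/kubernetes/pki/etcd, and it assumes snapshot files are named etcd-<timestamp>.db so that sorting by name is also sorting by age. The function names and backup directory are hypothetical.

```bash
# Saves an etcd snapshot to the given path. Run on a control plane node;
# the certificate paths are the kubeadm defaults.
snapshot_etcd() {
  dest=$1
  ETCDCTL_API=3 etcdctl \
    --endpoints=https://127.0.0.1:2379 \
    --cacert=/etc/kubernetes/pki/etcd/ca.crt \
    --cert=/etc/kubernetes/pki/etcd/server.crt \
    --key=/etc/kubernetes/pki/etcd/server.key \
    snapshot save "$dest"
}

# Keeps the newest N snapshots in a directory and deletes the rest.
# Relies on timestamped names (etcd-YYYYMMDD-HHMMSS.db) sorting chronologically.
prune_snapshots() {
  dir=$1
  keep=$2
  ls -1 "$dir"/etcd-*.db 2>/dev/null | sort -r | tail -n +"$((keep + 1))" |
    while IFS= read -r old; do
      rm -f -- "$old"
    done
}

# Example usage (directory is a placeholder):
#   snapshot_etcd "/var/backups/etcd/etcd-$(date +%Y%m%d-%H%M%S).db" \
#     && prune_snapshots /var/backups/etcd 7
```

Copying the snapshots to storage outside the cluster matters as much as taking them, since a snapshot kept only on the control plane node is lost with that node.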

Protecting Your Cluster with Vinchin Backup & Recovery

After building and maintaining a stable Kubernetes environment, protecting your workloads becomes essential for ensuring business continuity and meeting compliance requirements. Vinchin Backup & Recovery provides an enterprise-grade solution designed specifically for end-to-end Kubernetes backup and recovery across diverse infrastructures and use cases.

Its key capabilities include full/incremental backups; fine-grained protection at the namespace, application, PVC, and individual resource levels; policy-based scheduling and on-demand jobs; encrypted transmission with WORM protection; and cross-cluster or cross-version restore—including migrations across different storage backends. High-performance, multithreaded PVC backup acceleration with configurable concurrency further boosts efficiency.

Together, these capabilities enable reliable automation, flexible recovery options across heterogeneous environments, strong data security, optimized storage usage through compression and deduplication, and smooth integration into hybrid-cloud strategies.

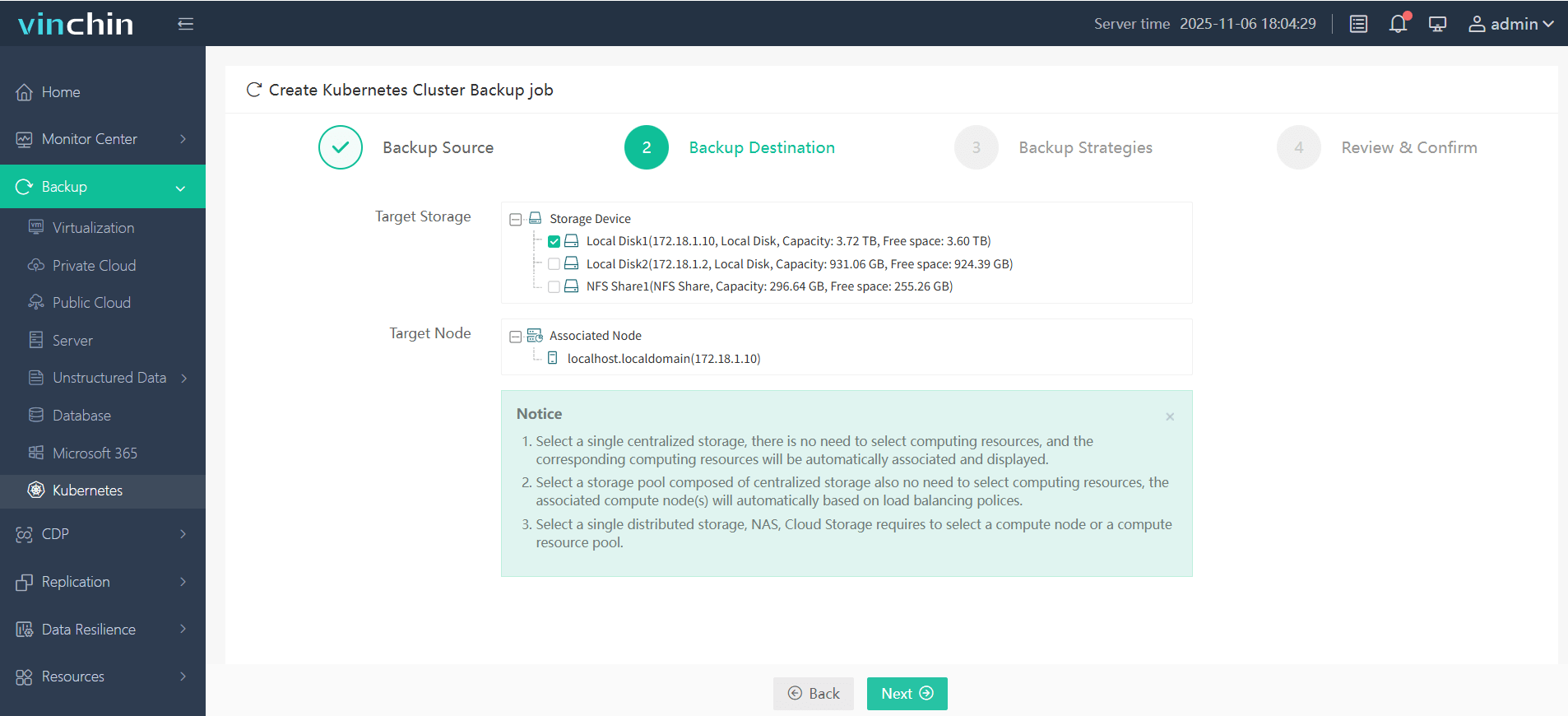

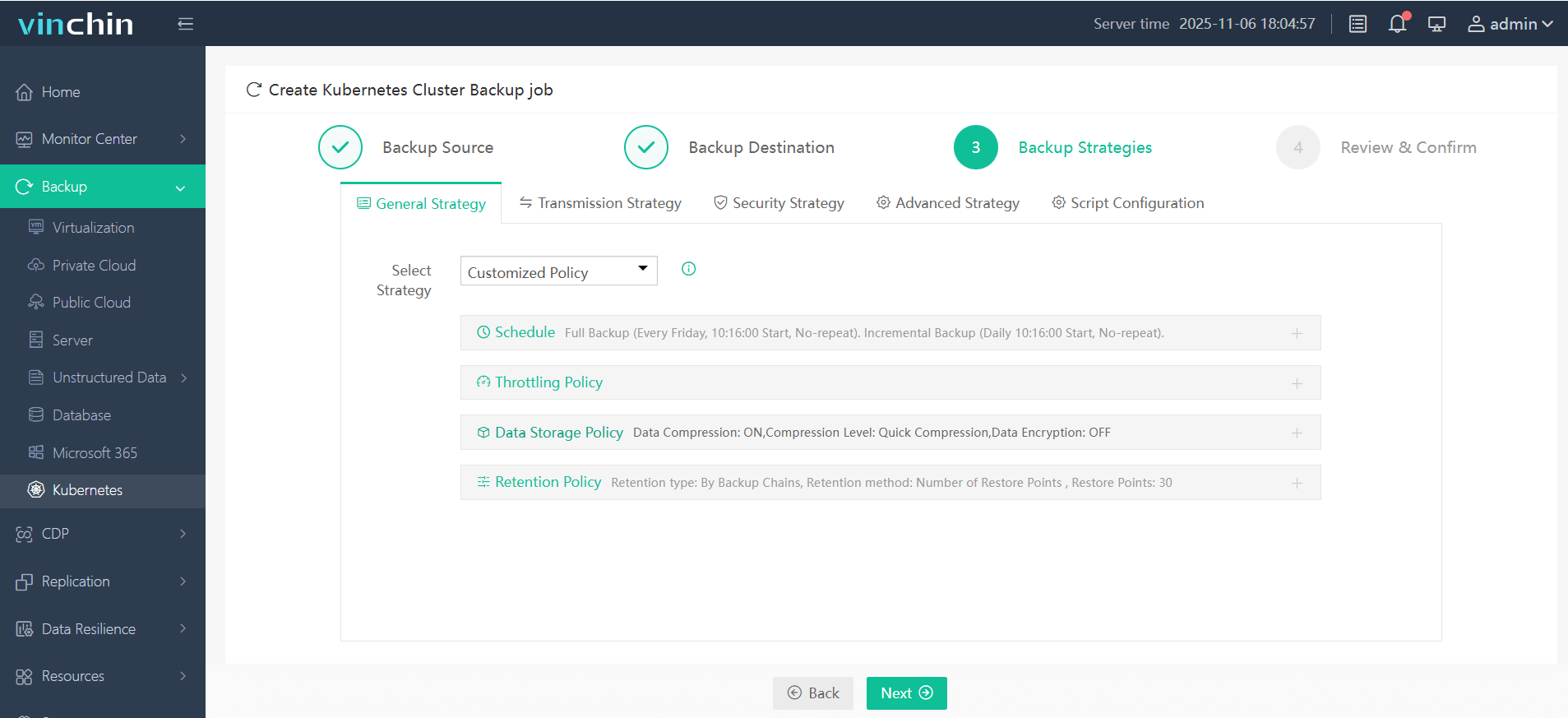

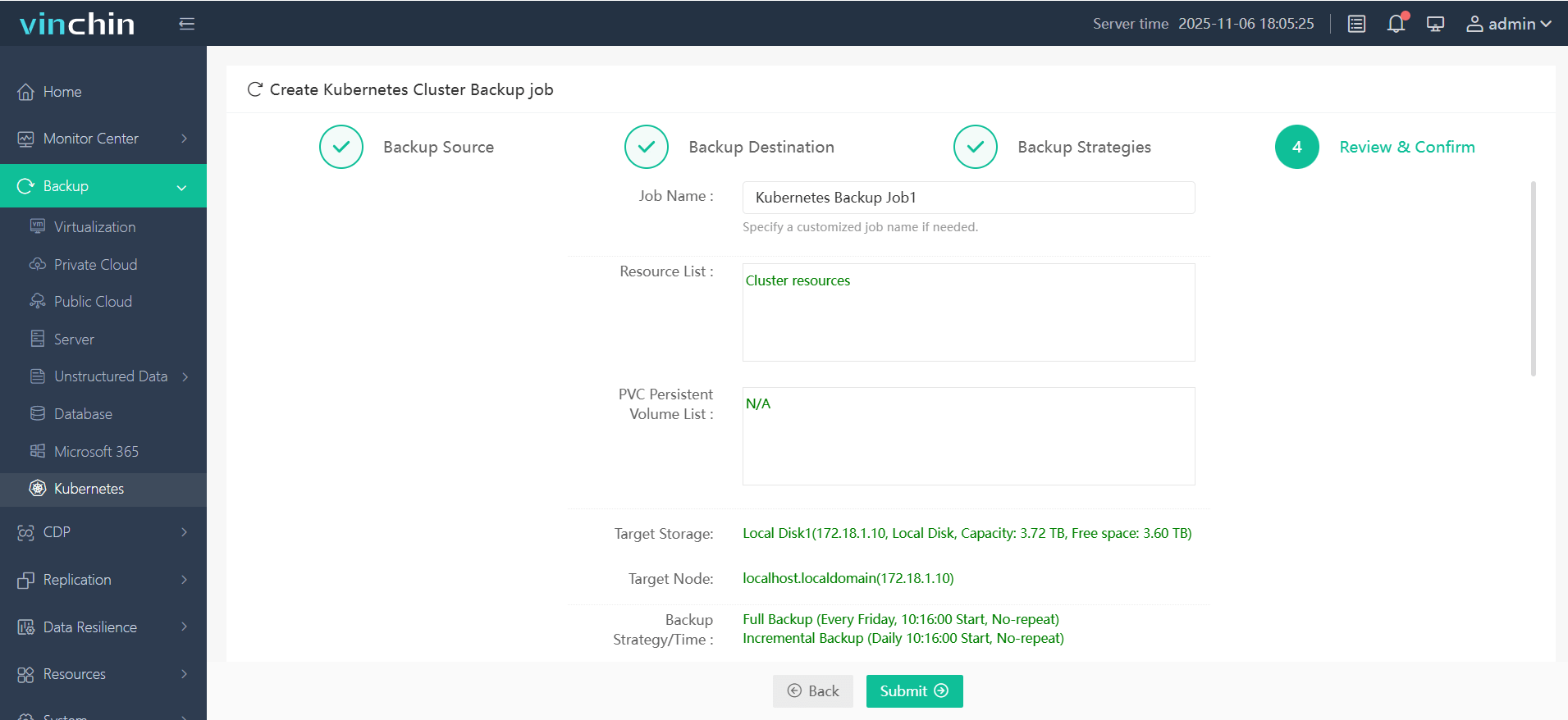

The intuitive web console of Vinchin Backup & Recovery simplifies backup operations into four clear steps tailored specifically for Kubernetes environments:

1. Select the backup source

2. Choose backup storage

3. Define backup strategy

4. Submit the job

Recognized globally by enterprises large and small—with top industry ratings—Vinchin Backup & Recovery offers a fully featured free trial valid for 60 days so you can experience its power firsthand before making any commitment.

Self Managed Kubernetes FAQs

Q1: Can I migrate my existing Docker Compose apps into a new self managed Kubernetes environment?

A1: Yes. Convert your Compose files into equivalent Kubernetes YAML manifests with a tool like Kompose, then deploy them with kubectl apply, targeting the desired namespaces.

Q2: What should I do if my etcd database becomes corrupted?

A2: Restore etcd from a recent snapshot stored offsite, then restart the affected control plane components one at a time until the API server responds normally.

Q3: How do I enforce multi-factor authentication (MFA) access controls?

A3: Integrate an external identity provider that supports MFA through OIDC, configured with the Kubernetes API server's startup flags.
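As an illustration of the OIDC answer above, the sketch below prints the API server flags involved. The function name, issuer URL, and client ID are hypothetical, and the claim names (email, groups) are assumptions that depend on what your identity provider puts in its tokens.

```bash
# Prints kube-apiserver OIDC flags for the given issuer URL and client ID.
# The issuer must use https, so other schemes are rejected.
oidc_flags() {
  issuer=$1
  client_id=$2
  case "$issuer" in
    https://*) ;;
    *) echo "issuer URL must start with https://" >&2; return 1 ;;
  esac
  printf '%s\n' \
    "--oidc-issuer-url=$issuer" \
    "--oidc-client-id=$client_id" \
    "--oidc-username-claim=email" \
    "--oidc-groups-claim=groups"
}

# Example usage (placeholder values):
#   oidc_flags https://idp.example.com kubernetes
```

On kubeadm clusters these flags are added to the API server's static pod manifest at /etc/kubernetes/manifests/kube-apiserver.yaml. MFA itself is enforced by the identity provider at login, not by Kubernetes.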

Conclusion

Self managed Kubernetes puts power, and responsibility, in your hands. You gain ultimate flexibility but must invest time mastering installation, troubleshooting, and ongoing care. With careful planning, you'll build resilient clusters tailored perfectly to business needs. Vinchin makes protecting those investments simple. Try their solution today for peace-of-mind backups that just work!
