- What Is Kubernetes?
- Why Use Kubernetes
- Understanding Kubernetes Architecture
- What Are Core Components of Kubernetes?
- How to Deploy a Local Kubernetes Cluster with Minikube?
- How to Deploy a Local Kubernetes Cluster with Kind?
- Choosing Between Minikube and Kind
- Protecting Your Cluster with Vinchin Backup & Recovery
- Kubernetes 101 FAQs
- Conclusion

Kubernetes has changed how teams deploy applications. But what exactly is it, and how do you get started if you’re new? This Kubernetes 101 guide covers everything from core ideas to hands-on local cluster setup. Whether you’re an operations administrator ready to dive in or just curious, read on for clear steps that take you from the basics to a working cluster.

What Is Kubernetes?

Kubernetes is an open-source platform that automates deploying, scaling, and managing containerized applications. It was created by Google engineers who wanted a better way to run containers across many machines without manual effort. Now maintained by the Cloud Native Computing Foundation (CNCF), Kubernetes lets you run containers on a group of computers as one unified system.

Instead of starting each container yourself or worrying about failures, Kubernetes handles these tasks automatically. It keeps your apps available—even if some parts fail—and helps them scale up or down as needed.

Why Use Kubernetes

Why do so many organizations rely on Kubernetes? The answer comes down to automation, reliability, and flexibility. With Kubernetes:

Failed containers restart automatically.

Workloads spread across machines for better performance.

Updates roll out with little downtime.

Apps scale up or down based on demand.

You can run workloads anywhere—from laptops to public clouds.

This means less manual work for administrators and more uptime for users. Whether you manage small test environments or large production systems, Kubernetes adapts to your needs.

Understanding Kubernetes Architecture

Before diving into components or deployment tools, it helps to see how everything fits together in a typical cluster architecture. At its core, a Kubernetes cluster brings together several nodes—some act as controllers (the brains), while others run your actual workloads (the muscle).

The control plane manages the overall state of the cluster using several services that talk through APIs and store data centrally in etcd—a distributed key-value store. Worker nodes receive instructions from the control plane; they host pods that contain your running containers.

Imagine this flow: You submit a deployment request via kubectl → kube-apiserver validates it and records it in etcd → the controller manager creates the pods the deployment calls for → kube-scheduler assigns each pod to a node → kubelet on that node launches the pod → kube-proxy routes Service traffic to it as needed.

A simple diagram would show:

Control Plane: scheduler and controller manager ↔ API server ↔ etcd (only the API server talks to etcd directly)

Worker Nodes: kubelet + kube-proxy + pods

All these pieces communicate over secure channels within your infrastructure—whether local or cloud-based.
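
On a running cluster, the pieces described above can be observed with kubectl. The sketch below assumes kubectl is installed and pointed at a local cluster such as Minikube or Kind; the function name show_cluster_layout is hypothetical, and the assumption that control plane components appear as pods in the kube-system namespace holds for these local tools but may differ on managed services.

```shell
#!/bin/sh
# Hypothetical helper: list nodes and the system pods that make up the cluster.
show_cluster_layout() {
  if ! command -v kubectl >/dev/null 2>&1; then
    echo "kubectl not found; install it first"
    return 0
  fi
  # Nodes that have joined the cluster, with their roles.
  kubectl get nodes -o wide || echo "no reachable cluster"
  # On Minikube and Kind, etcd, the API server, the scheduler, the controller
  # manager, and kube-proxy typically run as pods in the kube-system namespace.
  kubectl get pods --namespace kube-system || echo "no reachable cluster"
}

show_cluster_layout
```

Comparing the pod names in the output with the diagram is a quick way to confirm which component runs where.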

What Are Core Components of Kubernetes?

To master Kubernetes 101 basics, let’s break down its main building blocks into two groups: control plane components (the managers) and worker node components (the doers).

Control Plane Components

These keep your cluster running smoothly:

etcd stores all configuration data reliably.

kube-apiserver acts as the front door; every command goes through here.

kube-scheduler decides which node should run each pod based on resources.

kube-controller-manager runs the control loops that keep the cluster matching its desired state, for example by creating replacement pods when some fail.

Worker Node Components

These actually run your applications:

Node refers to any machine—physical or virtual—that joins the cluster.

kubelet runs on every node; it talks with the control plane and manages pods locally.

kube-proxy maintains network rules on each node so that traffic sent to a Service reaches the right pods.

Pod is the smallest unit you deploy; it can hold one or more tightly-coupled containers sharing storage/network resources.

For example: A multi-container pod might have both a web server container serving pages and a sidecar container handling logs—all sharing storage volumes within one pod definition file.
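
A minimal sketch of such a pod definition follows, written to a file from the shell. The pod name, the file name, the busybox sidecar, and the log path are illustrative assumptions rather than anything prescribed by this guide; the point is only that both containers mount the same emptyDir volume.

```shell
#!/bin/sh
# Write a hypothetical two-container pod manifest to the current directory.
cat > web-with-sidecar.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: web-with-sidecar        # hypothetical name
spec:
  volumes:
    - name: logs
      emptyDir: {}              # scratch volume shared by both containers
  containers:
    - name: web
      image: nginx
      volumeMounts:
        - name: logs
          mountPath: /var/log/nginx
    - name: log-sidecar
      image: busybox
      # Follow the access log that the web container writes to the shared volume.
      command: ["sh", "-c", "tail -F /var/log/nginx/access.log"]
      volumeMounts:
        - name: logs
          mountPath: /var/log/nginx
EOF

echo "Wrote web-with-sidecar.yaml; apply it with: kubectl apply -f web-with-sidecar.yaml"
```

Once applied, kubectl logs web-with-sidecar -c log-sidecar should show whatever the web container has logged, which is one way to confirm the volume is shared.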

Each component plays its part so you don’t have to micromanage every detail—just describe what you want in YAML files or kubectl commands!

How to Deploy a Local Kubernetes Cluster with Minikube?

Minikube makes learning easy by creating a single-node cluster right on your laptop or desktop—it’s perfect for testing before moving workloads elsewhere. Here’s how you start:

First check hardware requirements: at least 2 CPUs and 2GB of free RAM. If you use the VirtualBox or Hyper-V driver, make sure virtualization support is enabled in the BIOS; if you use the Docker driver, Docker must be installed.
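
As a rough pre-flight check, the sketch below prints the CPU count and total memory on Linux or macOS. The function names are hypothetical, and it reports total rather than free memory, so treat the result as an approximation of the requirements above.

```shell
#!/bin/sh
# Report CPU count and total memory for comparison with the minimums above
# (2 CPUs, 2 GB RAM). Linux and macOS are handled; other systems print "unknown".
cpu_count() {
  if command -v nproc >/dev/null 2>&1; then
    nproc
  elif command -v sysctl >/dev/null 2>&1; then
    sysctl -n hw.ncpu
  else
    echo unknown
  fi
}

total_mem_mb() {
  if [ -r /proc/meminfo ]; then
    # MemTotal is reported in kB on Linux.
    awk '/^MemTotal:/ { printf "%d\n", $2 / 1024 }' /proc/meminfo
  elif command -v sysctl >/dev/null 2>&1; then
    # hw.memsize is reported in bytes on macOS.
    sysctl -n hw.memsize | awk '{ printf "%d\n", $1 / 1024 / 1024 }'
  else
    echo unknown
  fi
}

echo "CPUs: $(cpu_count)"
echo "Total memory (MB): $(total_mem_mb)"
```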

1. Download Minikube from minikube.sigs.k8s.io

On macOS:

brew install minikube

On Linux:

curl -LO https://storage.googleapis.com/minikube/releases/latest/minikube-linux-amd64

sudo install minikube-linux-amd64 /usr/local/bin/minikube

On Windows:

choco install minikube

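2. Install kubectl, the main command-line tool, following the Kubernetes docs

On macOS:

brew install kubectl

On Linux (Debian/Ubuntu, after adding the Kubernetes apt repository described in the docs):

sudo apt-get install -y kubectl

Or download the binary directly as described in the Kubernetes docs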

3. Start Minikube by opening a terminal and typing minikube start

This sets up everything automatically using whichever supported driver (VirtualBox, Docker, and so on) is installed locally

4. Check status with minikube status; host, kubelet, and apiserver should all be listed as Running

5. Deploy an app! Try kubectl create deployment hello-minikube --image=kicbase/echo-server:1.0 then check progress via kubectl get pods

6. Expose your app outside the cluster: first create a Service with kubectl expose deployment hello-minikube --type=NodePort --port=8080, then run minikube service hello-minikube to open it in your browser

7. When finished experimenting stop things cleanly with minikube stop, then remove everything using minikube delete
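
The deploy and expose steps above can also be expressed declaratively. The sketch below writes a manifest containing a Deployment and a NodePort Service for the same image; the file name is hypothetical, and port 8080 is an assumption about where the echo server listens.

```shell
#!/bin/sh
# Declarative sketch of steps 5 and 6: one Deployment plus a NodePort Service.
cat > hello-minikube.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-minikube
spec:
  replicas: 1
  selector:
    matchLabels:
      app: hello-minikube
  template:
    metadata:
      labels:
        app: hello-minikube
    spec:
      containers:
        - name: echo-server
          image: kicbase/echo-server:1.0
          ports:
            - containerPort: 8080   # assumption: the echo server listens on 8080
---
apiVersion: v1
kind: Service
metadata:
  name: hello-minikube
spec:
  type: NodePort
  selector:
    app: hello-minikube
  ports:
    - port: 8080
      targetPort: 8080
EOF

echo "Wrote hello-minikube.yaml; apply it with: kubectl apply -f hello-minikube.yaml"
```

Keeping the manifest in a file means the same definition can be reapplied after minikube delete, instead of retyping the imperative commands.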

If something fails during startup (for example, “virtualization not supported”), double-check BIOS settings or try switching drivers, for example with minikube start --driver=docker.

Minikube gives you full admin access without risk—you can reset clusters anytime!

How to Deploy a Local Kubernetes Cluster with Kind?

Kind (“Kubernetes IN Docker”) lets you spin up clusters inside Docker containers rather than VMs—it’s lightweight yet powerful enough even for multi-node setups. Here’s how:

Check prerequisites first: Docker must be running before you start Kind (Docker Desktop on macOS or Windows, Docker Engine on Linux). At least 2GB of free RAM is recommended for each test cluster created inside Docker.

1. Install Kind:

On macOS/Linux, use the Go toolchain:

GO111MODULE="on" go install sigs.k8s.io/kind@latest

Or download pre-built binaries from the Kind Releases page

2. Create a new local cluster by typing in a terminal: kind create cluster

By default this creates a single control-plane node; advanced users can define custom multi-node configs via YAML files later.

3. Check that nodes are ready using: kubectl get nodes

Your new Kind-managed node(s) should appear once creation finishes and reach Ready status shortly after

4. Deploy an application, for example: kubectl create deployment hello-kind --image=nginx then monitor progress via: kubectl get pods

5. To access deployed apps externally, use port-forwarding: kubectl port-forward deployment/hello-kind 8080:80 then browse http://localhost:8080/

6. When done testing, remove everything cleanly: kind delete cluster
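
For the multi-node setups mentioned in step 2, a minimal sketch of a Kind configuration file follows. The file name and the cluster name "multi" are hypothetical; the layout (one control-plane node and two workers) is just one possible topology.

```shell
#!/bin/sh
# Write a Kind cluster config with one control-plane node and two workers.
cat > kind-multinode.yaml <<'EOF'
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
  - role: control-plane
  - role: worker
  - role: worker
EOF

# "multi" is a hypothetical cluster name; pick any name you like.
echo "Create it with:  kind create cluster --name multi --config kind-multinode.yaml"
echo "Remove it with:  kind delete cluster --name multi"
```

After creation, kubectl get nodes should list three nodes, each backed by its own Docker container.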

If errors occur (for example, “Docker daemon not found”), confirm Docker is running first!

One tip when working with multiple clusters (e.g., both Minikube AND Kind): use kubectl config get-contexts plus kubectl config use-context <name> so commands target only one active environment at once—avoiding confusion!
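
The context tip above can be wrapped in a small helper. In this sketch the function name use_cluster is hypothetical; "minikube" and "kind-kind" are the context names these tools create by default, so adjust them if you named your clusters differently.

```shell
#!/bin/sh
# Hypothetical helper: switch kubectl to a named context and confirm the change.
use_cluster() {
  context="$1"
  if ! command -v kubectl >/dev/null 2>&1; then
    echo "kubectl not found"
    return 1
  fi
  kubectl config use-context "$context" || return 1
  # Print the now-active context so later commands are not sent to the wrong cluster.
  kubectl config current-context
}

# Example: list known contexts, then target the default Kind cluster.
if command -v kubectl >/dev/null 2>&1; then
  kubectl config get-contexts
  use_cluster kind-kind || echo "context kind-kind not found"
else
  echo "kubectl not found; skipping"
fi
```

Calling use_cluster minikube switches back to the Minikube cluster in the same way.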

Choosing Between Minikube and Kind

Both tools serve different needs—but which fits best? Let’s compare:

Minikube works well if you want VM-based isolation plus built-in dashboard features; it mimics real-world environments closely but may require more memory/disk space due to virtualization overhead (~20GB recommended disk space). It also supports GPU passthrough/testing advanced drivers easily.

Kind shines when speed matters: it uses less disk and RAM since each “node” lives inside a lightweight Docker container (~4–10GB disk per test environment). Multi-node topologies are easy too; just edit YAML configs before launching clusters. However, some features needing direct VM access may not work identically compared with the full hypervisor-backed setups found in Minikube deployments.

Ask yourself:

Do I need fast disposable clusters mainly for CI/CD tests? Try Kind!

Want closer-to-production feel plus GUI dashboards? Choose Minikube!

You can always experiment freely since both tools coexist safely on one machine. If memory or CPU is tight, run only one cluster at a time, and check which kubectl context is active before issuing commands.

Protecting Your Cluster with Vinchin Backup & Recovery

After setting up local or production clusters, safeguarding critical data becomes vital against threats like loss, ransomware, or human error—which calls for robust backup solutions tailored for modern environments such as Kubernetes clusters. Vinchin Backup & Recovery stands out as an enterprise-level solution designed specifically for comprehensive protection of containerized workloads across diverse infrastructures.

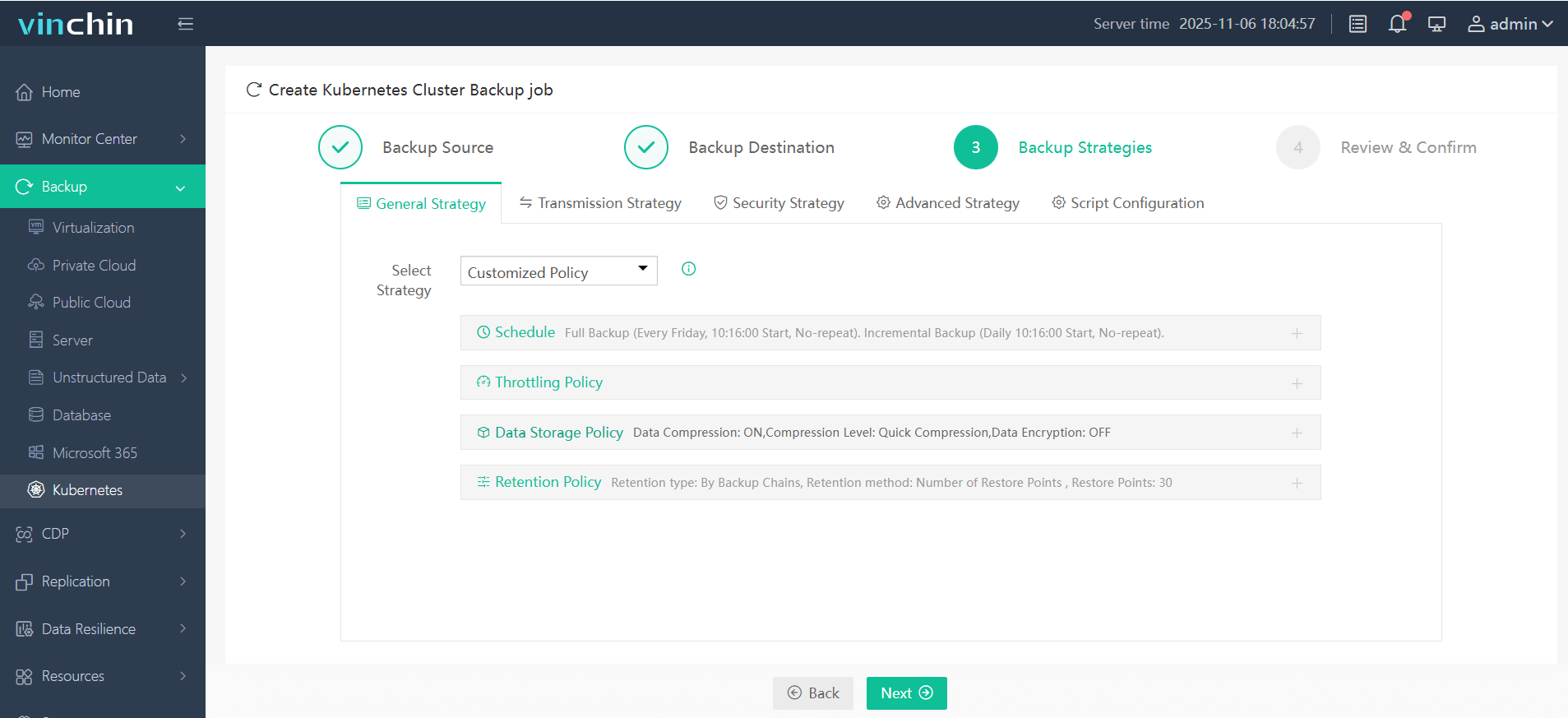

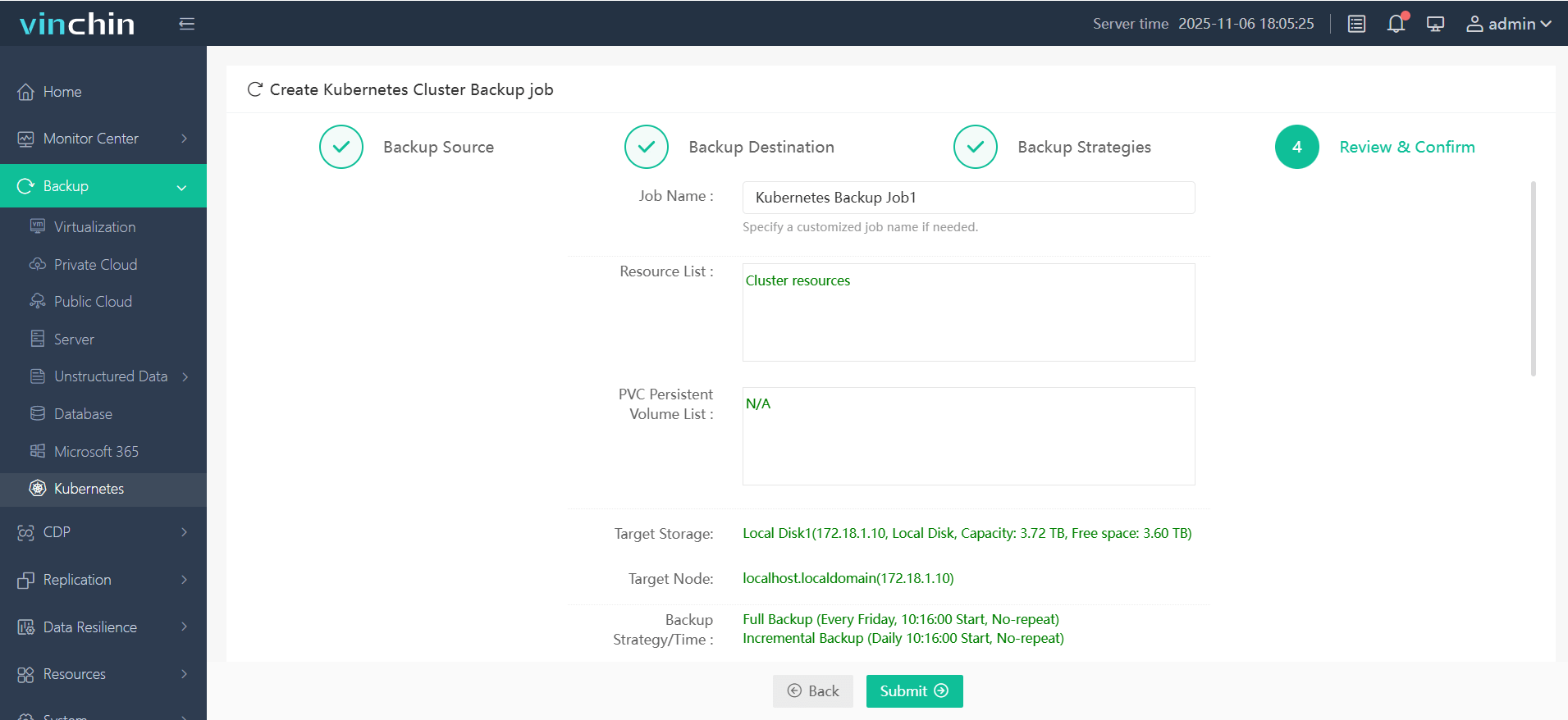

Among its extensive feature set are full/incremental backups, fine-grained restore options at various levels (cluster, namespace, application, PVC), policy-driven scheduling and retention management, cross-cluster/cross-version recovery capabilities—including migration between different storage backends—and strong encryption/compression technologies throughout backup workflows. Together these features ensure efficient data protection while minimizing operational complexity and maximizing business continuity during upgrades or incidents.

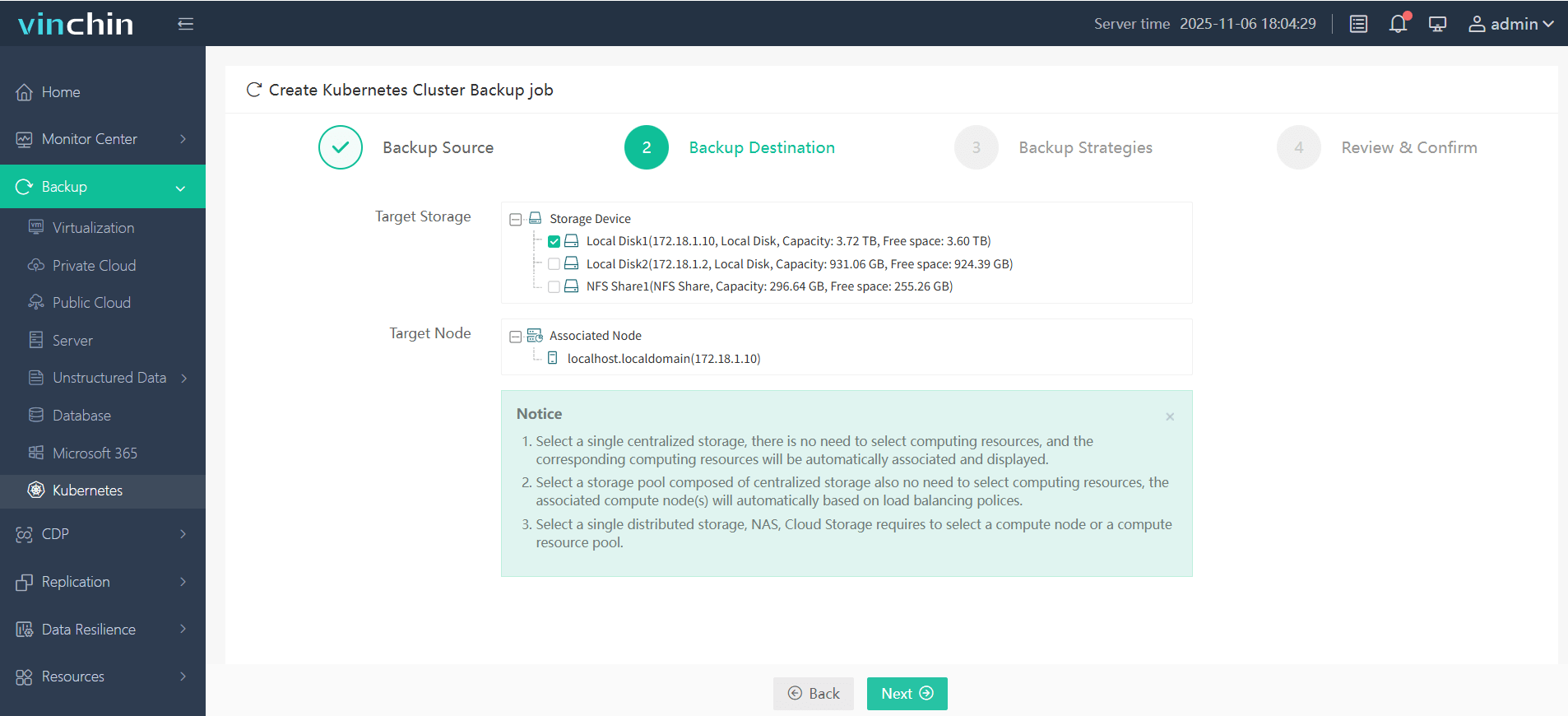

Vinchin Backup & Recovery offers an intuitive web console where backing up any Kubernetes environment typically takes just four steps:

Step 1 — Select the backup source;

Step 2 — Choose backup storage;

Step 3 — Define backup strategy;

Step 4 — Submit the job.

Trusted globally by enterprises of all sizes, with top ratings and thousands of customers worldwide, Vinchin Backup & Recovery delivers proven data protection for mission-critical systems. You can experience every feature risk-free with a full-featured 60-day trial.

Kubernetes 101 FAQs

Q1: How much disk space do Minikube or Kind typically require?

A1: Plan at least 20GB of free disk space for Minikube VM images; Kind usually needs only 4–10GB per test environment due to its lighter container footprint.

Q2: What should I do if my local cluster fails due to lack of resources?

A2: Increase the CPU and RAM allocated to the cluster (for Minikube via start flags, for Kind via Docker’s resource settings), then rerun minikube start or kind create cluster.

Q3: Can I switch between multiple local clusters easily?

A3: Yes. Use kubectl config get-contexts, then kubectl config use-context <name>, so commands target only one active environment.
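
For Q2, one way to raise Minikube's allocation is to pass explicit resource flags to minikube start. The sketch below only builds and prints the command; the variable names and default values are illustrative assumptions. An existing Minikube cluster generally needs minikube delete before new sizes take effect, and Kind has no equivalent flags because its nodes share whatever resources Docker itself is given.

```shell
#!/bin/sh
# Build a "minikube start" command line with explicit resource settings.
# The default values below are illustrative assumptions, not recommendations.
CPUS="${CPUS:-2}"
MEMORY_MB="${MEMORY_MB:-4096}"
DISK_SIZE="${DISK_SIZE:-20g}"

minikube_start_cmd() {
  echo "minikube start --cpus=$CPUS --memory=$MEMORY_MB --disk-size=$DISK_SIZE"
}

# Print the command for review; run it yourself once the values look right.
minikube_start_cmd
```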

Conclusion

With this Kubernetes 101 guide under your belt, from architecture basics through hands-on deployments, you’re ready for real-world orchestration challenges ahead! For robust protection at any scale, consider Vinchin's enterprise-grade solution today, and keep learning as the technology evolves!
