- What is Kubernetes PVC Backup?
- Why Backup PVCs to S3?
- Method 1. Using Velero for Kubernetes Backup PVC to S3
- Method 2. Using Restic for Kubernetes Backup PVC to S3
- Vinchin Backup & Recovery for Enterprise-grade Kubernetes Protection
- Kubernetes Backup PVC to S3 FAQs
- Conclusion

Data loss can strike your Kubernetes cluster at any time. A failed node, a bad deployment, or even a simple human error can put your persistent data at risk. That’s why backing up Persistent Volume Claims (PVCs) to S3 storage is essential for every operations team that values reliability. In this guide, you’ll learn how to back up Kubernetes PVCs to S3 using two proven methods, Velero and Restic, step by step.

What is Kubernetes PVC Backup?

A Persistent Volume Claim (PVC) in Kubernetes lets your applications store data that survives restarts or rescheduling of pods. Think of it as a request for durable storage within your cluster. Backing up a PVC means creating a copy of this data so you can restore it if disaster strikes—whether through snapshots or file-level exports depending on your tools and needs.

Backing up PVCs protects against accidental deletion, corruption, or infrastructure failure. Without backups, restoring critical application state becomes nearly impossible after an incident.

Why Backup PVCs to S3?

S3-compatible object storage has become a top choice for offsite backups, and with good reason. It offers high durability (Amazon S3 is designed for eleven nines), scalability on demand, and access from anywhere with an internet connection. Backing up your Kubernetes PVCs to S3 gives you:

- Offsite protection against local failures.
- Easy retention management: you control how long backups stay.
- Data restoration across clusters or even cloud providers.
- Costs that remain predictable thanks to pay-as-you-go pricing models.

Isn’t peace of mind worth investing in robust backups?

Method 1. Using Velero for Kubernetes Backup PVC to S3

Velero stands out as an open-source tool designed specifically for backing up Kubernetes resources—including both configuration objects and persistent volumes—to S3-compatible storage targets like AWS S3 or MinIO.

Before starting with Velero:

- Make sure you have a running Kubernetes cluster (v1.16+).
- Prepare an S3 bucket with proper credentials.
- Install both the kubectl and velero CLI tools on your workstation.

Velero Backup Strategies: Snapshot vs. File-Level

Velero supports two main strategies for backing up PVCs to S3:

- Snapshot-based backups use underlying storage provider features (like AWS EBS snapshots). These are fast but depend on compatible storage classes.
- File-level backups rely on Velero’s node agent, which uses Restic or Kopia to copy files out of the mounted pod volumes; these work regardless of storage backend but may take longer due to file copying overhead.

Choose snapshot mode if your cloud provider supports it—it’s faster and less resource-intensive—but fall back on file-level mode when working with unsupported storage types or when portability matters most.

Step-by-Step Commands

Step 1: Prepare Your S3 Bucket & Permissions

Create an S3 bucket dedicated for backups. Assign an IAM user or role permissions including s3:PutObject, s3:GetObject, s3:DeleteObject, and s3:ListBucket. Keep access keys secure; never hardcode them into public scripts!
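The four permissions listed above can be captured in an IAM policy document. The following is a minimal sketch, not an official policy: the bucket name `my-k8s-backups` and the file name `backup-s3-policy.json` are placeholders chosen for illustration, and snapshot-based backups on AWS need additional EC2 snapshot permissions that are omitted here.

```shell
# Sketch: write an IAM policy limited to the backup bucket.
# BUCKET is a hypothetical default; export your own bucket name to override it.
BUCKET="${BUCKET:-my-k8s-backups}"

cat > backup-s3-policy.json <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "ObjectAccess",
      "Effect": "Allow",
      "Action": ["s3:PutObject", "s3:GetObject", "s3:DeleteObject"],
      "Resource": "arn:aws:s3:::${BUCKET}/*"
    },
    {
      "Sid": "ListBucket",
      "Effect": "Allow",
      "Action": ["s3:ListBucket"],
      "Resource": "arn:aws:s3:::${BUCKET}"
    }
  ]
}
EOF
echo "wrote backup-s3-policy.json for bucket ${BUCKET}"
```

Object-level actions apply to `bucket/*` while `s3:ListBucket` applies to the bucket itself, which is why the policy uses two statements.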

Step 2: Install Velero with Helm

Add the official Helm chart repository:

helm repo add vmware-tanzu https://vmware-tanzu.github.io/helm-charts
helm repo update

Create a values.yaml file containing:

configuration:
  backupStorageLocation:
    - bucket: <YOUR_BUCKET>
      provider: aws
      config:
        region: <YOUR_REGION>
  volumeSnapshotLocation:
    - provider: aws
      config:
        region: <YOUR_REGION>
credentials:
  useSecret: true
  secretContents:
    cloud: |
      [default]
      aws_access_key_id=<YOUR_ACCESS_KEY>
      aws_secret_access_key=<YOUR_SECRET_KEY>
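With the `aws` provider, the Helm chart also needs the AWS object-store plugin loaded as an init container, which the values above do not include. A minimal sketch of a second values file follows; the file name `values-plugin.yaml` is an assumption, and `<PLUGIN_VERSION>` is deliberately left as a placeholder because the right tag depends on your Velero release.

```shell
# Sketch: extra Helm values that load the AWS plugin into the Velero pod.
# <PLUGIN_VERSION> is a placeholder: pick a velero-plugin-for-aws tag that
# matches your Velero version before installing.
cat > values-plugin.yaml <<'EOF'
initContainers:
  - name: velero-plugin-for-aws
    image: velero/velero-plugin-for-aws:<PLUGIN_VERSION>
    volumeMounts:
      - mountPath: /target
        name: plugins
EOF
echo "wrote values-plugin.yaml"
```

Helm accepts several `-f` flags, so this file can be passed alongside the main one, for example `-f values.yaml -f values-plugin.yaml`.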

Install Velero using Helm:

helm install velero vmware-tanzu/velero --namespace velero --create-namespace -f values.yaml

Step 3: Enable File System Backups When Needed

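If your volumes don’t support native snapshots, or you want portable backups, enable file-level mode. With the velero install CLI, add --use-node-agent; with the Helm chart, set deployNodeAgent: true. To back up all pod volumes at file level by default, also set defaultVolumesToFsBackup: true under configuration in your YAML config file.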
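For a Helm-based install, file-level mode can be switched on with a small values overlay. This is a sketch under the assumption that your chart version exposes the `deployNodeAgent` and `configuration.defaultVolumesToFsBackup` keys; the file name `values-fsb.yaml` is made up for the example.

```shell
# Sketch: Helm values overlay enabling the node agent and making
# file-system backup the default for pod volumes.
cat > values-fsb.yaml <<'EOF'
deployNodeAgent: true
configuration:
  defaultVolumesToFsBackup: true
EOF
echo "wrote values-fsb.yaml"
```

Passing it as an additional `-f values-fsb.yaml` merges the `configuration` map with the storage settings from the main values file rather than replacing them.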

Step 4: Annotate Pods For Targeted Backups

To specify which pod volumes should be backed up at file level:

kubectl -n <namespace> annotate pod/<pod-name> backup.velero.io/backup-volumes=<volume-name>

Alternatively, configure global defaults so all eligible volumes are included automatically unless opted out via annotation.
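The opt-in and opt-out annotations can be wrapped in two small helpers. This is an illustrative sketch: the function names `fsb_include` and `fsb_exclude` are hypothetical, while the annotation keys are the ones Velero reads.

```shell
# Sketch: helpers around the Velero file-system backup annotations.
# Function names are made up for this example.

# Opt volumes in (used when file-level backup is NOT the default).
# usage: fsb_include <namespace> <pod> <volume[,volume...]>
fsb_include() {
  kubectl -n "$1" annotate --overwrite "pod/$2" \
    "backup.velero.io/backup-volumes=$3"
}

# Opt volumes out (used when file-level backup IS the default).
# usage: fsb_exclude <namespace> <pod> <volume[,volume...]>
fsb_exclude() {
  kubectl -n "$1" annotate --overwrite "pod/$2" \
    "backup.velero.io/backup-volumes-excludes=$3"
}
```

Annotations set directly on a pod disappear when the pod is recreated, so for Deployments and StatefulSets it is usually safer to put them in the pod template.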

Step 5: Create Your First Backup Job

Run this command from your terminal:

velero backup create <backup-name> --include-namespaces <namespace>

Check progress anytime using:

velero backup describe <backup-name>

Your data now flows securely into the designated S3 bucket!
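A one-off backup is a good first test, but recurring backups are usually created as a Velero schedule. The helper below is a sketch: the function name `schedule_backup` and the default cron expression and retention are assumptions to adjust, not recommendations from Velero.

```shell
# Sketch: create a recurring Velero backup for one namespace.
# usage: schedule_backup <schedule-name> <namespace> [cron] [ttl]
# Defaults (01:00 daily, keep 7 days) are illustrative only.
schedule_backup() {
  velero schedule create "$1" \
    --schedule="${3:-0 1 * * *}" \
    --include-namespaces "$2" \
    --ttl "${4:-168h}"
}
```

For example, `schedule_backup nightly prod` would back up the `prod` namespace every night and let each backup expire after a week; `velero schedule get` lists what is configured.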

Step 6: Restore From Backup If Disaster Strikes

Restore everything from a previous backup by running:

velero restore create --from-backup <backup-name>

Monitor status here (velero restore get lists the restore names):

velero restore describe <restore-name>

Method 2. Using Restic for Kubernetes Backup PVC to S3

Restic is another powerful tool that excels at direct-to-S3 encrypted backups from inside containers—perfect if you prefer scripting over full-featured platforms like Velero or need fine-grained control over schedules via CronJobs within Kubernetes itself.

You’ll need:

- An accessible S3 bucket plus API keys.
- Base64 encoding utilities (echo, etc.).
- Familiarity with basic YAML editing in CronJob specs.

Optimizing Restic for Large PVCs

When dealing with large persistent volumes:

- Use Restic’s --exclude flag within job arguments to skip non-essential files such as caches or temp directories.
- Adjust CPU/memory requests in CronJob specs so pods don’t get evicted mid-backup.
- Monitor upload speed closely; consider enabling multipart uploads if supported by your object store.
- Schedule jobs during off-hours when network traffic is low (for example, at midnight UTC) to minimize impact on production workloads.
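The exclusion advice above might translate into a backup call like the following sketch. The function name `restic_backup`, the `*.tmp` pattern, the `cache` subdirectory, and the `scheduled` tag are all illustrative assumptions; the repository location and credentials are expected in the environment (`RESTIC_REPOSITORY`, `RESTIC_PASSWORD`, `AWS_ACCESS_KEY_ID`, `AWS_SECRET_ACCESS_KEY`).

```shell
# Sketch: back up one directory while skipping caches and temp files.
# usage: restic_backup <path>
# Exclude patterns below are examples; tailor them to your application.
restic_backup() {
  restic backup "$1" \
    --exclude-caches \
    --exclude '*.tmp' \
    --exclude "$1/cache" \
    --tag scheduled
}
```

In a CronJob the same flags would go into the container's `args` list instead of a shell function.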

Step-by-Step Commands

Step 1: Set Up Your Bucket & Credentials

Create an exclusive bucket just for these backups; generate access keys granting only necessary permissions (PutObject, etc.).

Step 2: Store Secrets Securely

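Store the credentials in a Kubernetes Secret rather than in plain text inside manifests or scripts. kubectl create secret base64-encodes --from-literal values for you, so pass the raw values; the manual base64 commands are only needed if you write the Secret manifest by hand. Keep in mind that base64 is an encoding, not encryption.

# Only needed for a hand-written Secret manifest:
echo -n "<ACCESS_KEY>" | base64
echo -n "<SECRET_KEY>" | base64
echo -n "<RESTIC_PASSWORD>" | base64

# Replace the placeholders with the raw (unencoded) values:
kubectl create secret generic s3-secret \
  --from-literal=AWS_ACCESS_KEY_ID=<ACCESS_KEY> \
  --from-literal=AWS_SECRET_ACCESS_KEY=<SECRET_KEY> \
  --from-literal=RESTIC_PASSWORD=<RESTIC_PASSWORD> \
  -n <namespace>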

Step 3: Deploy A Scheduled CronJob For Automated Backups

Clone sample manifests such as those found here. Edit fields like bucket name (BUCKET=), region (AWS_DEFAULT_REGION=), mount paths (mountPath:), then apply changes via kubectl:

kubectl apply -f cronjob-backup.yaml
kubectl get cronjobs   # Confirm creation
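The sample manifests referenced above are not reproduced here, so the following is only a rough sketch of what a `cronjob-backup.yaml` could look like. It assumes the official `restic/restic` image (whose entrypoint is the restic binary), the `s3-secret` Secret from Step 2, and a CronJob named `restic-backup`; the image tag, bucket path, PVC name, schedule, and resource requests are placeholders or illustrative values.

```shell
# Sketch: generate a CronJob manifest that backs up one PVC with restic.
# Everything in <ANGLE_BRACKETS> is a placeholder to fill in.
cat > cronjob-backup.yaml <<'EOF'
apiVersion: batch/v1
kind: CronJob
metadata:
  name: restic-backup
spec:
  schedule: "0 0 * * *"        # midnight UTC, as suggested above
  concurrencyPolicy: Forbid    # never run two backups at once
  jobTemplate:
    spec:
      template:
        spec:
          restartPolicy: OnFailure
          containers:
            - name: restic
              image: restic/restic:<VERSION>
              args: ["backup", "/data", "--exclude", "*.tmp"]
              env:
                - name: RESTIC_REPOSITORY
                  value: s3:s3.amazonaws.com/<YOUR_BUCKET>/<PATH>
                - name: AWS_DEFAULT_REGION
                  value: <YOUR_REGION>
              envFrom:
                - secretRef:
                    name: s3-secret
              resources:
                requests:
                  cpu: 250m
                  memory: 512Mi
              volumeMounts:
                - name: data
                  mountPath: /data
                  readOnly: true
          volumes:
            - name: data
              persistentVolumeClaim:
                claimName: <PVC_NAME>
EOF
echo "wrote cronjob-backup.yaml"
```

If the PVC is ReadWriteOnce, the backup pod has to land on the same node as the application pod that already mounts it, so some setups need node affinity or a storage class that allows shared access.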

Step 4: Initialize The Repository Once

Initialize only once per new repository location using this sequence (adapted from community guides):

kubectl -n <namespace> create job --from=cronjob/restic-backup restic-init --dry-run=client -o yaml | \
kubectl patch --dry-run=client -f - --type=json -p '[{"op": "replace", "path": "/spec/template/spec/containers/0/args", "value": ["init"]}]' -o yaml | \
kubectl apply -f -

# Check logs afterward using kubectl -n <namespace> logs job/restic-init

Step 5: Trigger Manual Test Backups As Needed

Start an ad-hoc job at any time by creating a Job from the existing CronJob template under a new name; it runs immediately instead of waiting for the next scheduled interval:

kubectl -n <namespace> create job --from=cronjob/restic-backup backup-test
kubectl -n <namespace> logs -f job/backup-test   # Watch progress live

Step 6: Prune Old Snapshots Regularly

Edit prune policies directly inside manifest files like cronjob-backup-prune.yaml. Apply updates periodically so old versions don’t accumulate indefinitely—saving costs while keeping recovery points fresh!
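Inside the prune manifest, the policy typically boils down to a `restic forget` call with keep rules. A minimal sketch follows; the function name `restic_prune` and the default retention counts are assumptions, and the repository and credentials are again expected in the environment.

```shell
# Sketch: apply a retention policy and reclaim space in one pass.
# usage: restic_prune [daily] [weekly] [monthly]
# Default counts (7/4/6) are illustrative, not a recommendation.
restic_prune() {
  restic forget \
    --keep-daily "${1:-7}" \
    --keep-weekly "${2:-4}" \
    --keep-monthly "${3:-6}" \
    --prune
}
```

Adding `--dry-run` to the `restic forget` call first shows which snapshots would be removed without deleting anything.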

Step 7: Restore Data On Demand

Edit the provided restore Job template (job-restore.yaml), specifying the snapshot ID to restore and the destination claim name. Then launch the restore with kubectl apply and follow the Job logs until it completes.
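The command such a restore Job ends up running is a `restic restore`. The helper below is a sketch with a hypothetical function name; snapshot IDs can be looked up with `restic snapshots`, and `latest` selects the most recent one.

```shell
# Sketch: restore one snapshot into a target directory.
# usage: restic_restore <snapshot-id|latest> <target-dir>
restic_restore() {
  restic restore "$1" --target "$2"
}
```

Restic recreates the original absolute paths underneath the target, so a snapshot of `/data` restored with a target of `/restore` ends up in `/restore/data`; restoring with a target of `/` puts the files back at `/data` on the mounted claim.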

Vinchin Backup & Recovery for Enterprise-grade Kubernetes Protection

For organizations seeking streamlined enterprise protection beyond open-source tools, Vinchin Backup & Recovery delivers robust capabilities tailored specifically for Kubernetes environments. As a professional solution built for business-critical workloads, it enables full/incremental backups, fine-grained recovery at the cluster, namespace, application, and PVC levels, policy-driven automation alongside one-off jobs, cross-cluster/cross-version restores—even between heterogeneous clusters—and advanced security features such as end-to-end encryption and WORM protection. With high-speed multithreaded performance and flexible retention policies among its core strengths, Vinchin Backup & Recovery ensures reliable data safety while simplifying compliance across hybrid infrastructures—all managed through one unified platform.

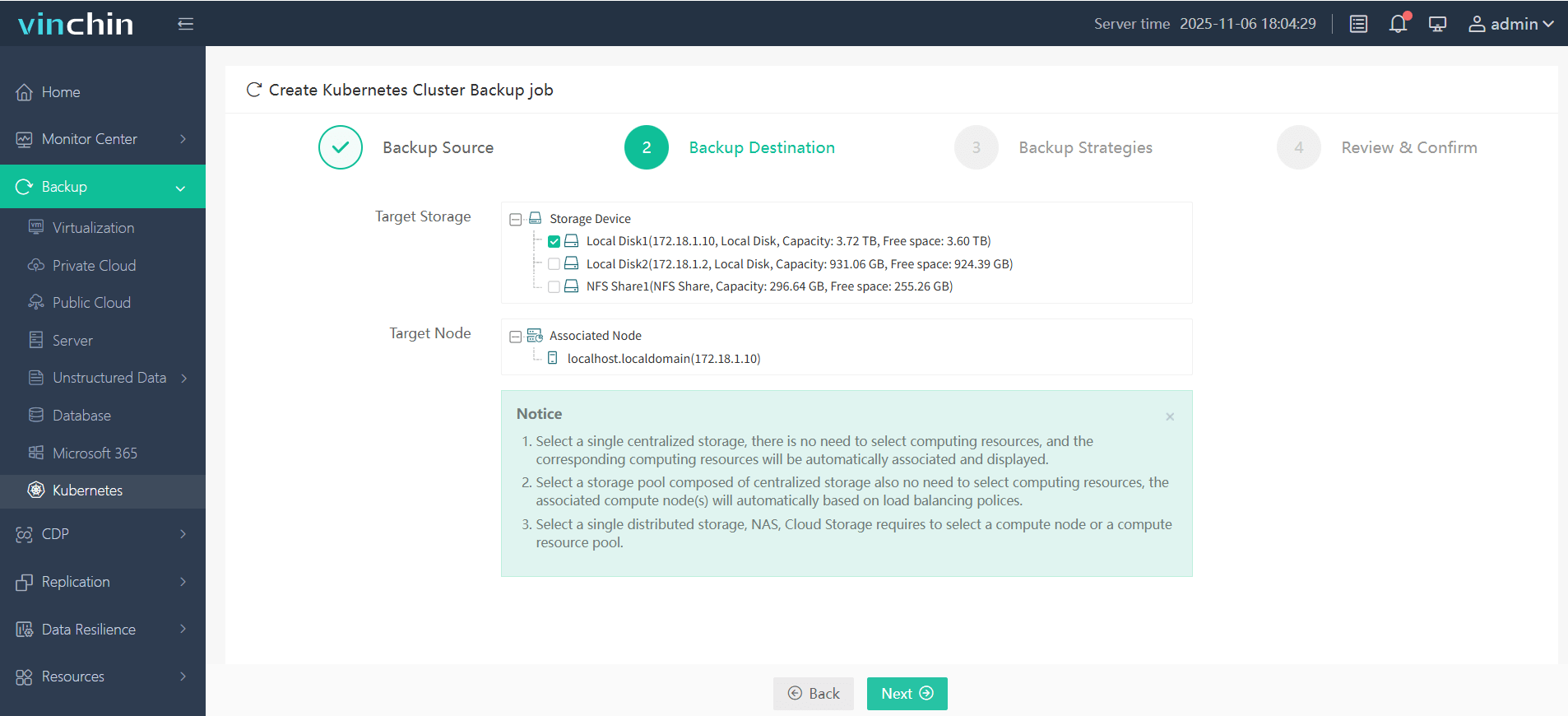

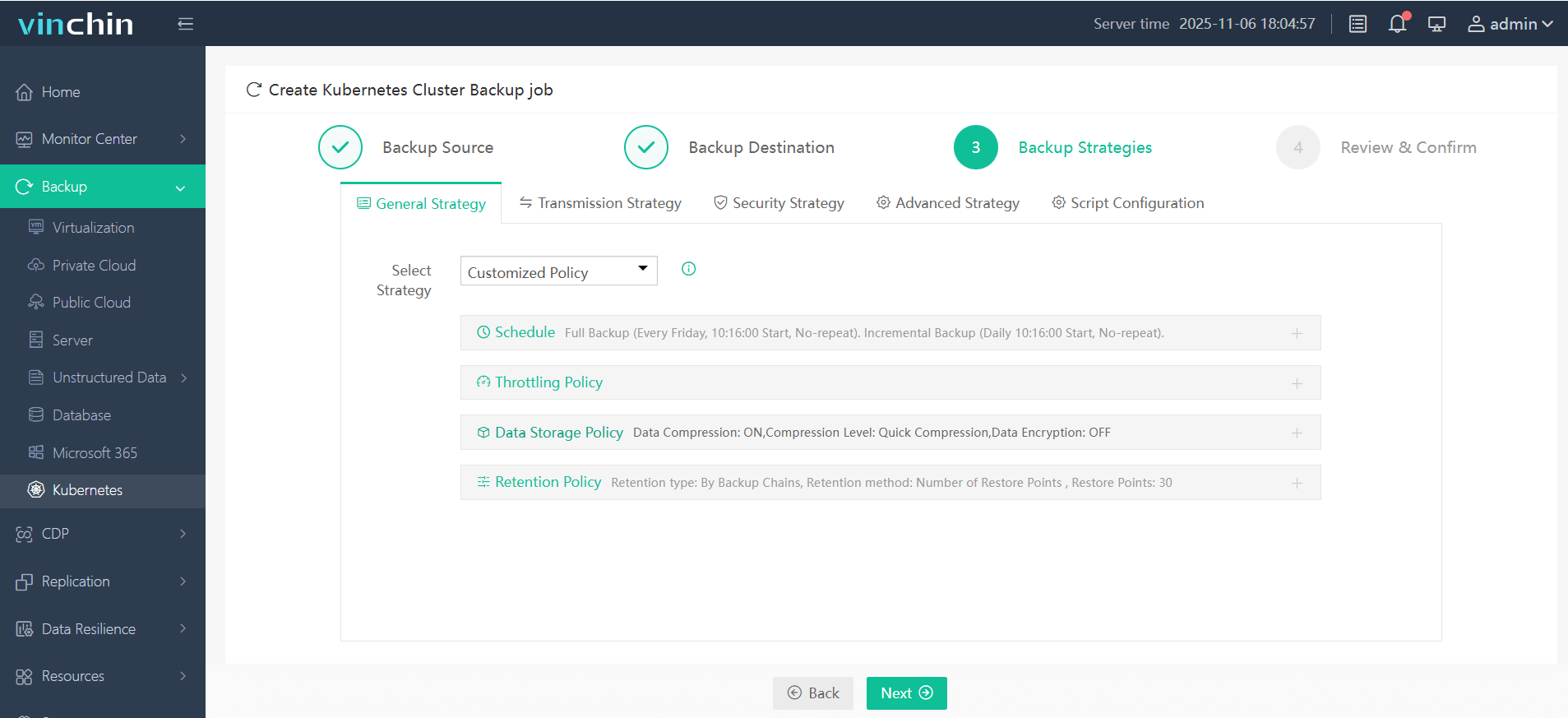

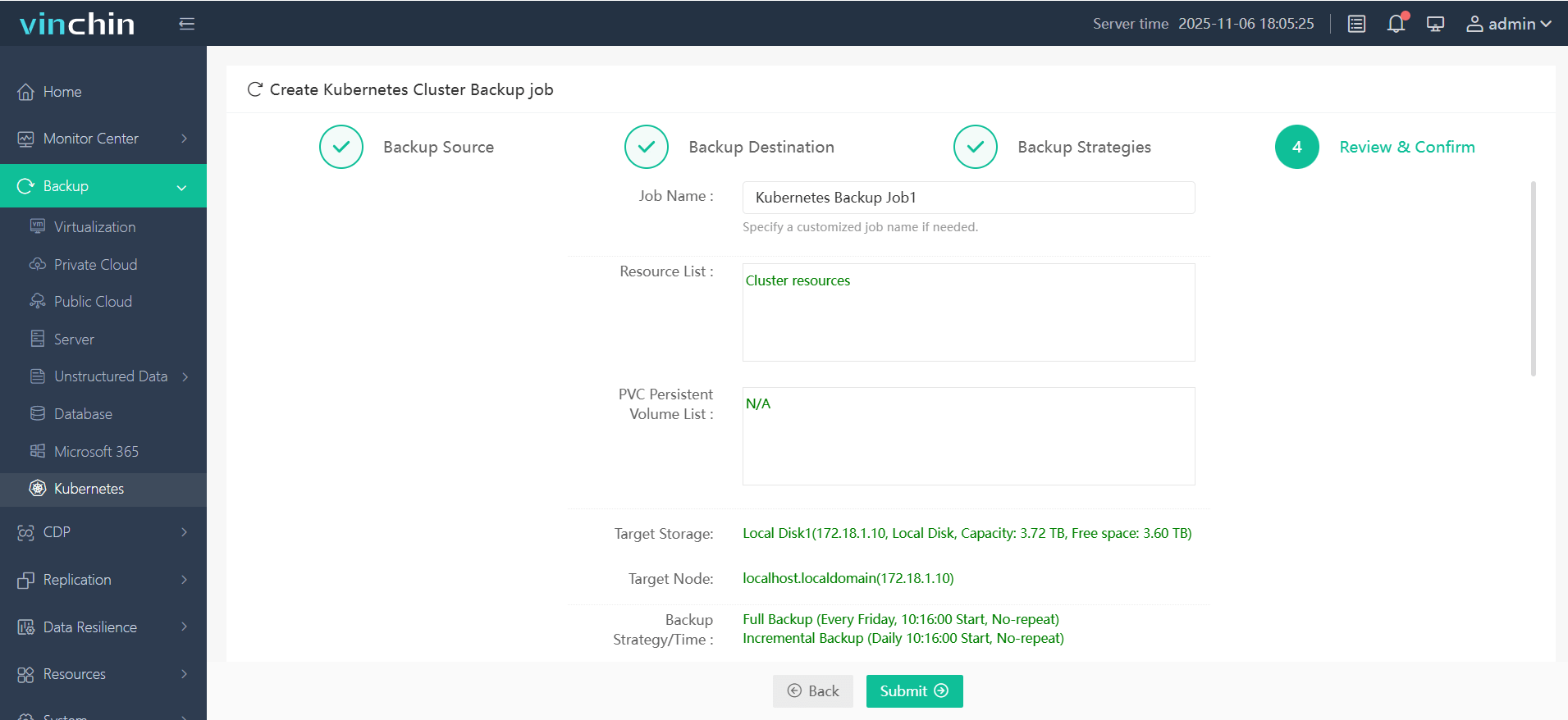

The intuitive web console makes protecting your Kubernetes workloads straightforward—just follow four steps:

Step 1. Select the backup source

Step 2. Choose the backup storage

Step 3. Define the backup strategy

Step 4. Submit the job

Vinchin Backup & Recovery is trusted globally by enterprises large and small, with top ratings from satisfied customers everywhere. Start today with a free full-featured trial valid for sixty days.

Kubernetes Backup PVC to S3 FAQs

Q1: How do I handle stateful app backups that require an ordered shutdown?

Use pre-backup hooks, such as Velero’s exec hooks, or Restic Jobs with init containers, so that databases flush buffers and quiesce before the backup is taken. Quiescing first is what makes the resulting backup application-consistent rather than merely crash-consistent.
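Velero's exec hooks are configured through pod annotations. The sketch below freezes and unfreezes a filesystem around the backup; the function name `add_backup_hooks` is hypothetical, and it assumes the named container ships `/sbin/fsfreeze` and has the privileges to run it. For a database you would normally swap in its own flush or lock command.

```shell
# Sketch: attach pre/post backup hooks to a pod via Velero annotations.
# usage: add_backup_hooks <namespace> <pod> <container> <mount-path>
# Assumes the container has /sbin/fsfreeze and may run it.
add_backup_hooks() {
  kubectl -n "$1" annotate --overwrite "pod/$2" \
    "pre.hook.backup.velero.io/container=$3" \
    "pre.hook.backup.velero.io/command=[\"/sbin/fsfreeze\", \"--freeze\", \"$4\"]" \
    "post.hook.backup.velero.io/container=$3" \
    "post.hook.backup.velero.io/command=[\"/sbin/fsfreeze\", \"--unfreeze\", \"$4\"]"
}
```

The post hook matters as much as the pre hook: without the unfreeze step the application stays blocked on writes after the backup finishes.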

Q2: What’s the network latency impact during large-scale uploads?

Monitor transfer duration through velero backup describe and backup logs, compress data when possible, and schedule large jobs for off-hours. If supported by your object store, enable transfer-acceleration features—especially useful when your production clusters are geographically distant from the bucket region.

Q3: Can I encrypt my data myself before uploading instead of relying solely on cloud defaults?

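Yes. Restic encrypts every repository client-side (AES-256) with a password you supply, and Velero’s file-system backups are stored in Restic or Kopia repositories that are encrypted as well. The resource manifests Velero uploads are not encrypted client-side, so protect those with server-side bucket encryption. Make sure to store the passphrases separately in dedicated Secret objects, not together with your regular API credentials.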

Conclusion

Protecting persistent data through regular Kubernetes PVC backups to S3 is essential for long-term business continuity—whether the challenge comes next week or next year, and no matter where your production clusters are running. With proper automation and periodic recovery testing, your disaster-readiness stays sharp while manual workload drops significantly. And for teams looking to simplify container-level protection even further, Vinchin provides an easier, more streamlined way to safeguard Kubernetes workloads.
